Reference blog: https://www.cnblogs.com/llongst/p/9608886.html

Location of the material package (lab system):

IP: 192.168.30.9

Account: root

Password: 123456

Size: 18.5 GB

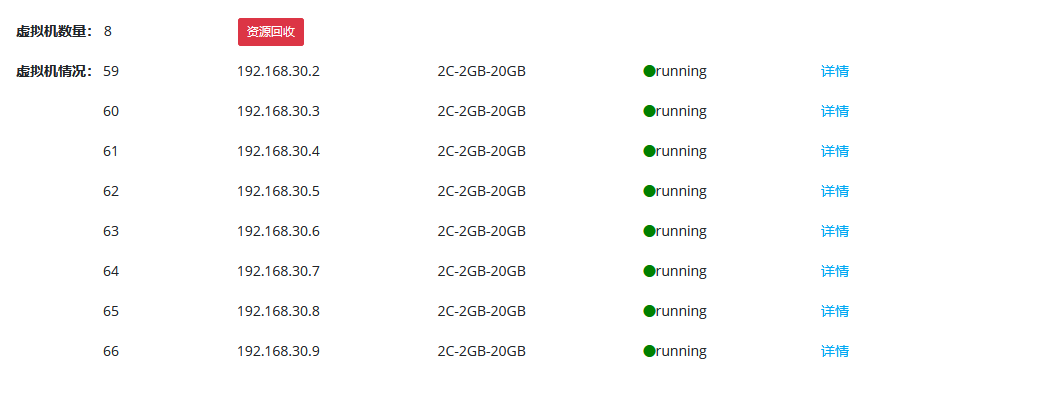

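VM resources

Mapping of organizations, domain names, and IP addresses

| Domain name | IP address | Organization | Internal IP |
| --- | --- | --- | --- |
| orderer0.example.com, kafka0, zookeeper0 | 192.168.30.2 | org3 | 123 |
| orderer1.example.com, kafka1, zookeeper1 | 192.168.30.3 | org3 | 124 |
| orderer2.example.com, kafka2, zookeeper2 | 192.168.30.4 | org3 | 125 |
| kafka3 | 192.168.30.5 | org3 | 126 |
| peer0.org1.example.com | 192.168.30.6 | org1 | 127 |
| peer1.org1.example.com | 192.168.30.7 | org1 | 128 |
| peer0.org2.example.com | 192.168.30.8 | org2 | 129 |
| peer1.org2.example.com | 192.168.30.9 | org2 | 130 |

The internal IP column appears to be the last octet of each VM's 192.168.1.x address; for example, 192.168.30.4 is paired with 192.168.1.125 in the network configuration shown later.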

Network topology

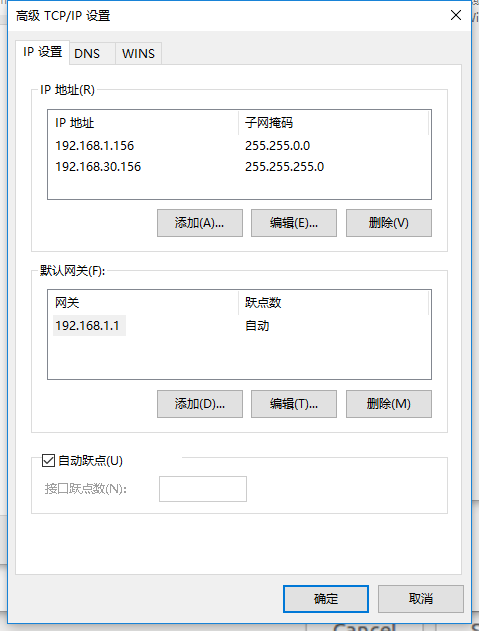

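Configuring the VMs

The VMs are not on the same network segment as the local computer. To communicate with them, the local machine needs two static addresses: a local IP (192.168.1.x) and an IP in the VMs' segment (192.168.30.x).

The IP configuration is as follows.

192.168.1.156 (IP behind the router, used for Internet access)

192.168.30.156 (192.168.30.x, used to connect to the VMs)

Remember to save the settings.

On Windows 10, open a command prompt and ping a VM's IP to test the connection.

```text
C:\Users\cups>ping 192.168.30.2

Pinging 192.168.30.2 with 32 bytes of data:
Reply from 192.168.30.2: bytes=32 time<1ms TTL=64
Reply from 192.168.30.2: bytes=32 time<1ms TTL=64
Reply from 192.168.30.2: bytes=32 time<1ms TTL=64

Ping statistics for 192.168.30.2:
    Packets: Sent = 3, Received = 3, Lost = 0 (0% loss),
Approximate round trip times in milli-seconds:
    Minimum = 0ms, Maximum = 0ms, Average = 0ms
Control-C
^C
C:\Users\cups>
```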

(I) Basic VM environment configuration

(1) Xshell login

Xshell download: https://www.netsarang.com/zh/xshell/

Xshell login walkthrough: https://jingyan.baidu.com/article/624e7459032b4334e8ba5a33.html

After logging in, the output looks like this.

```text
Connecting to 192.168.30.2:22...
Connection established.
To escape to local shell, press Ctrl+Alt+].

Last login: Wed Sep 16 18:01:42 2020 from 192.168.30.156
[root@vm192168302 ~]#
```

(2) Give the VMs Internet access

Reason: the generated VMs (internal network) sit in the 192.168.30.x segment and cannot reach the Internet. Each one needs an additional IP address in the 192.168.1.x segment.

```shell
# Switch to the directory that holds the network configuration files
cd /etc/sysconfig/network-scripts/
# Back up the file
cp ifcfg-ens160 ifcfg-ens160.bak
# Edit the file; example content is shown below
vim ifcfg-ens160
# Reboot the VM to apply the change (restarting the network service kept failing)
reboot
# Wait a moment, reconnect to the VM, then test
ping www.baidu.com
```

Example content of ifcfg-ens160:

```shell
HWADDR=00:50:56:B8:D7:DC
NAME=ens160
# The gateway must be changed to 192.168.1.1
GATEWAY=192.168.1.1
DNS1=8.8.8.8
DNS2=114.114.114.114
DEVICE=ens160
ONBOOT=yes
USERCTL=no
BOOTPROTO=static
NETMASK=255.255.255.0
IPADDR=192.168.30.4
PEERDNS=no
# End marker of the commented-out section
# NM: check_link_down
# NM: return 1;
# NM: }
# Add the new IP address 192.168.1.x
TYPE=Ethernet
PROXY_METHOD=none
BROWSER_ONLY=no
PREFIX=24
IPADDR1=192.168.1.125
PREFIX1=24
NETMASK1=255.255.255.0
DEFROUTE=yes
IPV4_FAILURE_FATAL=no
IPV6INIT=no
```

(3) Firewall

Turn off the firewall.

```shell
systemctl stop firewalld
systemctl status firewalld
systemctl disable firewalld
```

Note: to keep the firewall running instead, open the required ports as follows.

1) Configure the firewall

firewall-cmd --zone=public --permanent --add-port=5984/tcp --add-port=7050/tcp --add-port=7051/tcp --add-port=7052/tcp --add-port=7053/tcp --add-port=7054/tcp --add-port=8053/tcp --add-port=9053/tcp --add-port=10053/tcp

```text
[root@vm192168302 ~]# firewall-cmd --zone=public --permanent --add-port=5984/tcp --add-port=7050/tcp --add-port=7051/tcp --add-port=7052/tcp --add-port=7053/tcp --add-port=7054/tcp --add-port=8053/tcp --add-port=9053/tcp --add-port=10053/tcp
success
[root@vm192168302 ~]#
```

2) Reload

firewall-cmd --reload

```text
[root@vm192168302 ~]# firewall-cmd --reload
success
[root@vm192168302 ~]#
```

3) List the open ports

firewall-cmd --zone=public --list-ports

```text
[root@vm192168302 ~]# firewall-cmd --zone=public --list-ports
5984/tcp 7050/tcp 7051/tcp 7052/tcp 7053/tcp 7054/tcp 8053/tcp 9053/tcp 10053/tcp
[root@vm192168302 ~]#
```
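The long `--add-port` list is easy to mistype. As a minimal sketch, assuming a POSIX shell, the same command can be built from a port list. The function name `build_firewall_cmd` and the variable `FABRIC_PORTS` are made up for illustration, and the function only prints the command rather than running it.

```shell
#!/bin/sh
# Hypothetical helper: print the firewall-cmd invocation for a list of TCP ports.
# It only prints the command; nothing is changed on the system.
build_firewall_cmd() {
    cmd="firewall-cmd --zone=public --permanent"
    for port in "$@"; do
        cmd="$cmd --add-port=${port}/tcp"
    done
    printf '%s\n' "$cmd"
}

# Ports taken from the firewall-cmd command in this section.
FABRIC_PORTS="5984 7050 7051 7052 7053 7054 8053 9053 10053"

# Usage: build_firewall_cmd $FABRIC_PORTS
```

Printing instead of executing makes it possible to review the command before running it as root, followed by `firewall-cmd --reload`.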

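(4) Configure SELinux

SELinux (Security-Enhanced Linux) is the US National Security Agency's (NSA) implementation of mandatory access control for Linux. The NSA developed it with the help of the Linux community. Under this access control system, a process can access only the files its task requires. SELinux is installed by default on Fedora and Red Hat Enterprise Linux and is available as an easily installed package on other distributions.

Most blog tutorials perform this step without explaining why; in effect it turns this security subsystem off. A commonly cited reason is that SELinux in enforcing mode can block containers from accessing host directories mounted as volumes.

```shell
# Show the current mode
getenforce
# Change the mode at runtime: enforcing / permissive, or 1 / 0
setenforce 0
# Show detailed SELinux information
sestatus -v
# Edit the configuration (takes effect after a reboot)
vi /etc/selinux/config
# In the file, change SELINUX=enforcing to SELINUX=disabled
```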
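The manual edit of /etc/selinux/config can also be scripted. The following is a minimal sketch, assuming a POSIX shell; the function name `set_selinux_mode` is hypothetical. It rewrites only the line that starts with `SELINUX=` and leaves `SELINUXTYPE=` untouched.

```shell
#!/bin/sh
# Hypothetical helper: set the SELINUX= line of a config file to the given mode.
# Goes through a temporary file so it does not rely on GNU-only `sed -i`,
# and writes back with `cat` so the original file keeps its permissions.
set_selinux_mode() {
    file=$1
    mode=$2
    tmp="${file}.tmp.$$"
    sed "s/^SELINUX=.*/SELINUX=${mode}/" "$file" > "$tmp" \
        && cat "$tmp" > "$file" \
        && rm -f "$tmp"
}

# Usage (as root, on the VM): set_selinux_mode /etc/selinux/config disabled
```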

(5) Set up time synchronization

Synchronize the system time.

```shell
# Remove the existing local time file
rm -rf /etc/localtime
# Set the time zone
ln -s /usr/share/zoneinfo/Asia/Shanghai /etc/localtime
# Configure the system clock
cat >> /etc/sysconfig/clock <<'EOF'
ZONE="Asia/Shanghai"
UTC=false
ARC=false
EOF
# Install and enable the time service
yum install -y ntp
systemctl start ntpd
systemctl enable ntpd
# Synchronize against the Aliyun time server at boot
echo "/usr/sbin/ntpdate ntp1.aliyun.com > /dev/null 2>&1; /sbin/hwclock -w" >>/etc/rc.d/rc.local
# Scheduled task: "0 */1 * * *" runs at minute 0 of every hour
# (the target path is cut off in the original)
echo "0 */1 * * * ntpdate ntp1.aliyun.com > /dev/null 2>&1; /sbin/hwclock -w" >>/etc/cro
```

(6) Install common tools

```shell
yum install -y curl \
    wget \
    tree \
    lrzsz \
    dos2unix \
    git
```

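(7) Switch the package mirror

This speeds up package downloads. The VMs run CentOS 7 (the Docker packages used later are el7 builds), so the CentOS 7 repo file is used here.

```shell
# Use the NetEase (163) mirror
# Directory that stores the repo files
cd /etc/yum.repos.d/
# Rename the current file as a backup
mv CentOS-Base.repo CentOS-Base.repo.ori
# Download the mirror's repo file
wget http://mirrors.163.com/.help/CentOS7-Base-163.repo
# Rename it
mv CentOS7-Base-163.repo CentOS-Base.repo
# Clear the cache
yum -y clean all
# Rebuild the cache
yum makecache
```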

(8) Edit the hosts file

Add the domain-name-to-IP mappings.

```shell
vim /etc/hosts
```

Entries to add:

```text
192.168.30.2 orderer0.example.com
192.168.30.2 kafka0
192.168.30.2 zookeeper0
192.168.30.3 orderer1.example.com
192.168.30.3 kafka1
192.168.30.3 zookeeper1
192.168.30.4 orderer2.example.com
192.168.30.4 kafka2
192.168.30.4 zookeeper2
192.168.30.5 kafka3
192.168.30.6 peer0.org1.example.com
192.168.30.7 peer1.org1.example.com
192.168.30.8 peer0.org2.example.com
192.168.30.9 peer1.org2.example.com
```
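Because the same entries have to be added on every VM, a small script can be less error-prone than editing by hand. This is a minimal sketch, assuming a POSIX shell with awk; the function name `add_host_entry` is hypothetical. It appends an entry only when the host name is not already present, so it can be run repeatedly.

```shell
#!/bin/sh
# Hypothetical helper: append "ip name" to a hosts file unless the name
# already appears as a host-name field on some line.
add_host_entry() {
    hosts_file=$1
    ip=$2
    name=$3
    # Scan fields 2..NF of every line for an exact match of the name.
    if awk -v n="$name" '{ for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                         END { exit !found }' "$hosts_file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}

# Usage (as root):
#   add_host_entry /etc/hosts 192.168.30.2 orderer0.example.com
#   add_host_entry /etc/hosts 192.168.30.2 kafka0
```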

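(II) Basic Fabric environment configuration

We prepared a basic environment package; the explanation below follows that package.

The directory layout of the package is:

[root@vm192168302 fabric-tools]# tree -L 2

The readme.txt file in each subdirectory records:

(1) Where the files in that folder were downloaded from.

(2) The operations to perform in that folder.

Note: the settings under system are all covered in (I) Basic VM environment configuration and are not repeated here.

Transfer the package to the /root directory of the VM with Xftp.

Xftp download: https://www.netsarang.com/zh/xshell/

Xftp login walkthrough: https://jingyan.baidu.com/article/19020a0a64d941529c284279.html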

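(1) go

Fabric is written in Go and needs a Go environment to run.

Go package download: https://studygolang.com/dl/golang/go1.11.linux-amd64.tar.gz

Switch to the go directory: cd /root/fabric-tools/go

Follow the instructions in readme.txt, which reads as follows:

```shell
# Download: https://studygolang.com/dl/golang/go1.11.linux-amd64.tar.gz
# Extract the archive to /usr/local
# (the original readme names go1.13.linux-amd64.tar.gz here, which does not match
#  the download link; use the name of the archive actually present in this directory)
tar -C /usr/local -xzf go1.11.linux-amd64.tar.gz
# Open /etc/profile
sudo vim /etc/profile
# Add the following lines
export PATH=$PATH:/usr/local/go/bin
export GOROOT=/usr/local/go
export GOPATH=$HOME/go
export PATH=$PATH:$HOME/go/bin
# Reload /etc/profile
source /etc/profile
# Check the Go version
go version
```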

(2) docker-ce

Install Docker Community Edition.

docker-ce package download: https://download.docker.com/linux/centos/7/x86_64/stable/Packages/

Switch to the docker-ce directory: cd /root/fabric-tools/docker-ce

Follow the instructions in readme.txt, which reads as follows. It contains two alternatives: installing from the Aliyun repository, and installing from the rpm files in the directory.

```shell
# Download: https://download.docker.com/linux/centos/7/x86_64/stable/Packages/

#### Alternative 1: install from the repository ####
# Remove old versions
yum remove docker \
    docker-client \
    docker-client-latest \
    docker-common \
    docker-latest \
    docker-latest-logrotate \
    docker-logrotate \
    docker-selinux \
    docker-engine-selinux \
    docker-engine
rm -rf /var/lib/docker/
# Install dependencies
yum install -y yum-utils device-mapper-persistent-data lvm2
# Use the Aliyun mirror
yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
# Install docker-ce
yum install -y docker-ce
# Start docker-ce
systemctl start docker
# Check the Docker version
docker --version
# Start on boot
chkconfig docker on

#### Alternative 2: install from the local rpm files ####
# Remove old versions
yum remove docker \
    docker-client \
    docker-client-latest \
    docker-common \
    docker-latest \
    docker-latest-logrotate \
    docker-logrotate \
    docker-selinux \
    docker-engine-selinux \
    docker-engine
rm -rf /var/lib/docker/
# Force-install the packages that make up Docker
rpm -ivh containerd.io-1.2.6-3.3.el7.x86_64.rpm --force --nodeps
rpm -ivh container-selinux-2.119.2-1.911c772.el7_8.noarch.rpm --force --nodeps
rpm -ivh docker-ce-19.03.9-3.el7.x86_64.rpm --force --nodeps
rpm -ivh docker-ce-cli-19.03.9-3.el7.x86_64.rpm --force --nodeps
# Start docker-ce
systemctl start docker
# Check the Docker version
docker --version
# Start on boot
chkconfig docker on
```

(3) docker-compose

A tool for defining and running multi-container Docker applications.

docker-compose package download: https://github.com/docker/compose/releases

Switch to the docker-compose directory: cd /root/fabric-tools/docker-compose

Follow the instructions in readme.txt, which reads as follows:

```shell
# Download: https://github.com/docker/compose/releases
# Make docker-compose executable
chmod +x docker-compose-Linux-x86_64
# Copy it to /usr/local/bin
sudo cp docker-compose-Linux-x86_64 /usr/local/bin/docker-compose
# Check the version
docker-compose -v
```

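(4) bin

Fabric executable binaries.

Switch to the bin directory: cd /root/fabric-tools/bin

Follow the instructions in readme.txt, which reads as follows:

```shell
# Make the files in the current directory readable, writable, and executable
chmod 777 -R ./*
# Copy them to /usr/local/bin
cp ./* /usr/local/bin
# Check the version of the binaries
peer version
```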

(5) images

Fabric images.

fabric-tools is the local client image. It is mainly used to run peer-related operations such as channel and chaincode (smart contract) management.

fabric-peer is the image for peer nodes. Peers validate and endorse data submitted by clients before it is committed.

fabric-orderer is the image for orderer nodes. Orderers validate, package, and distribute data submitted by clients.

fabric-ccenv is the environment in which chaincode is built.

fabric-baseos is the base OS image on which chaincode containers run.

fabric-couchdb is the image of the pluggable third-party database.

fabric-kafka is the image of the distributed publish/subscribe messaging system.

fabric-zookeeper provides a highly available, high-performance, consistent open-source coordination service.

Note: the images pulled for 1.4.0 do not include fabric-ca. fabric-ca is a local CA server that serves fabric-ca-client operations such as enrollment, registration, and revocation. If you do not run a custom CA service, this image is not needed.

Docker image download: https://hub.docker.com/t/hyperledger

Switch to the images directory: cd /root/fabric-tools/images

Follow the migration steps in readme.txt, which reads as follows:

```shell
#### Packaging ####
# List the images
docker images
# Save each image by ID
docker save 0a44f4261a55 > /home/cups/images/fabric-tools-1.4.0.tar
docker save 5b31d55f5f3a > /home/cups/images/fabric-ccenv-1.4.0.tar
docker save 54f372205580 > /home/cups/images/fabric-orderer-1.4.0.tar
docker save 304fac59b501 > /home/cups/images/fabric-peer-1.4.0.tar
docker save d36da0db87a4 > /home/cups/images/fabric-zookeeper-0.4.14.tar
docker save a3b095201c66 > /home/cups/images/fabric-kafka-0.4.14.tar
docker save f14f97292b4c > /home/cups/images/fabric-couchdb-0.4.14.tar
docker save 75f5fb1a0e0c > /home/cups/images/fabric-baseos-amd64-0.4.14.tar

#### Migration ####
# Load each archive, then tag the loaded image
docker load < fabric-tools-1.4.0.tar
docker tag 0a44f4261a55 hyperledger/fabric-tools:latest
docker load < fabric-ccenv-1.4.0.tar
docker tag 5b31d55f5f3a hyperledger/fabric-ccenv:latest
docker load < fabric-orderer-1.4.0.tar
docker tag 54f372205580 hyperledger/fabric-orderer:latest
docker load < fabric-peer-1.4.0.tar
docker tag 304fac59b501 hyperledger/fabric-peer:latest
docker load < fabric-zookeeper-0.4.14.tar
docker tag d36da0db87a4 hyperledger/fabric-zookeeper:latest
docker load < fabric-kafka-0.4.14.tar
docker tag a3b095201c66 hyperledger/fabric-kafka:latest
docker load < fabric-couchdb-0.4.14.tar
docker tag f14f97292b4c hyperledger/fabric-couchdb:latest
docker load < fabric-baseos-amd64-0.4.14.tar
docker tag 75f5fb1a0e0c hyperledger/fabric-baseos-amd64-0.4.15:latest
# List the images
docker images
```
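The load-and-tag pairs follow one pattern, so they could be looped. The sketch below is only an illustration under stated assumptions: the function names `image_name_from_tar` and `load_and_tag_all` are hypothetical, and the loop assumes every archive was saved by image ID so that `docker load` prints a `Loaded image ID: sha256:<id>` line. Note that it would tag the base OS image as hyperledger/fabric-baseos-amd64, not as hyperledger/fabric-baseos-amd64-0.4.15 as the readme does.

```shell
#!/bin/sh
# Hypothetical helper: derive the repository name from an archive name,
# e.g. fabric-peer-1.4.0.tar -> hyperledger/fabric-peer.
image_name_from_tar() {
    base=$(basename "$1" .tar)               # strip directory and .tar suffix
    printf 'hyperledger/%s\n' "${base%-*}"   # drop the trailing -<version>
}

# Hypothetical helper: load every archive in a directory and tag the
# loaded image as <name>:latest. Requires a running Docker daemon.
load_and_tag_all() {
    for tarball in "$1"/*.tar; do
        id=$(docker load < "$tarball" | sed -n 's/^Loaded image ID: sha256://p')
        docker tag "$id" "$(image_name_from_tar "$tarball"):latest"
    done
}

# Usage: load_and_tag_all /root/fabric-tools/images
```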

The command-line session looks like this.

```text
[root@vm192168302 images]# docker load < fabric-tools-1.4.0.tar
8823818c4748: Loading layer [==================================================>]    119MB/119MB
19d043c86cbc: Loading layer [==================================================>]  15.87kB/15.87kB
883eafdbe580: Loading layer [==================================================>]  14.85kB/14.85kB
4775b2f378bb: Loading layer [==================================================>]  5.632kB/5.632kB
75b79e19929c: Loading layer [==================================================>]  3.072kB/3.072kB
220c7a6e2ac8: Loading layer [==================================================>]  22.53kB/22.53kB
d8d6ef4c05aa: Loading layer [==================================================>]  10.35MB/10.35MB
9283de655c18: Loading layer [==================================================>]  22.53kB/22.53kB
c4862fd40ae6: Loading layer [==================================================>]  352.7MB/352.7MB
a2cc03ff1591: Loading layer [==================================================>]  22.53kB/22.53kB
f6adef21933b: Loading layer [==================================================>]  937.7MB/937.7MB
24b718a8e88d: Loading layer [==================================================>]  43.98MB/43.98MB
9417a1383cb1: Loading layer [==================================================>]  127.4MB/127.4MB
ad03abc2b5fa: Loading layer [==================================================>]  82.43kB/82.43kB
Loaded image ID: sha256:0a44f4261a5517cc74c4028456856deae0bd9954dcdeb0f88e4dc0ffc256d273
[root@vm192168302 images]# docker tag 0a44f4261a55 hyperledger/fabric-tools:latest
[root@vm192168302 images]# docker load < fabric-ccenv-1.4.0.tar
299d65a76a92: Loading layer [==================================================>]  23.44MB/23.44MB
cc3cde1f12df: Loading layer [==================================================>]  14.86MB/14.86MB
09dc1bd62059: Loading layer [==================================================>]   2.56kB/2.56kB
Loaded image ID: sha256:5b31d55f5f3af45eb98d8545f186c582e2b27c0568fa425444f586c4e346a77d
[root@vm192168302 images]# docker tag 5b31d55f5f3a hyperledger/fabric-ccenv:latest
[root@vm192168302 images]# docker load < fabric-orderer-1.4.0.tar
b9eb505a3e8c: Loading layer [==================================================>]  4.096kB/4.096kB
3b9a3ba970d1: Loading layer [==================================================>]  26.16MB/26.16MB
3aa6361a1a0c: Loading layer [==================================================>]  82.43kB/82.43kB
Loaded image ID: sha256:54f372205580fca697f9f072f00da38fa5d2d55560003d0e276bcefc99eb64bf
[root@vm192168302 images]# docker tag 54f372205580 hyperledger/fabric-orderer:latest
[root@vm192168302 images]# docker load < fabric-peer-1.4.0.tar
af99ce756b5e: Loading layer [==================================================>]  32.81MB/32.81MB
30b4111c45d9: Loading layer [==================================================>]  82.43kB/82.43kB
Loaded image ID: sha256:304fac59b50128710bbcce2554210c3c5355c3c9a3b57d5a8dcaf31c07164f39
[root@vm192168302 images]# docker tag 304fac59b501 hyperledger/fabric-peer:latest
[root@vm192168302 images]# docker load < fabric-zookeeper-0.4.14.tar
3ba6f5dc2e67: Loading layer [==================================================>]  144.4kB/144.4kB
48d9f4be826f: Loading layer [==================================================>]  346.6kB/346.6kB
ca0d7b871545: Loading layer [==================================================>]  44.78MB/44.78MB
fb6342b2909e: Loading layer [==================================================>]  3.072kB/3.072kB
Loaded image ID: sha256:d36da0db87a4ee3018918ed30257189f7c1b90b78ab6d16a88eb8eb9dc85052a
[root@vm192168302 images]# docker tag d36da0db87a4 hyperledger/fabric-zookeeper:latest
[root@vm192168302 images]# docker load < fabric-kafka-0.4.14.tar
3627471ecdd1: Loading layer [==================================================>]   53.9MB/53.9MB
9982c4d512fb: Loading layer [==================================================>]  11.26kB/11.26kB
ea2abeced08a: Loading layer [==================================================>]   5.12kB/5.12kB
Loaded image ID: sha256:a3b095201c66eaf71bfa099cab415cfdd1a469a2095ef52c10a71643c61467bc
[root@vm192168302 images]# docker tag a3b095201c66 hyperledger/fabric-kafka:latest
[root@vm192168302 images]# docker load < fabric-couchdb-0.4.14.tar
376b0dd2dfaf: Loading layer [==================================================>]   1.96MB/1.96MB
4165b7ca4b1c: Loading layer [==================================================>]   71.8MB/71.8MB
82ff0855cf12: Loading layer [==================================================>]  3.292MB/3.292MB
7e79668eef46: Loading layer [==================================================>]    578kB/578kB
6b66e47ff200: Loading layer [==================================================>]  32.16MB/32.16MB
b589d3869b08: Loading layer [==================================================>]  4.096kB/4.096kB
e5b264a8235d: Loading layer [==================================================>]  4.096kB/4.096kB
216933f60c90: Loading layer [==================================================>]  5.632kB/5.632kB
b831ca5cf866: Loading layer [==================================================>]  4.096kB/4.096kB
0d46a58941eb: Loading layer [==================================================>]  43.01kB/43.01kB
Loaded image ID: sha256:f14f97292b4c960c5294037898352db8ac9c46f87c5bc812bd0ffc0838f15546
[root@vm192168302 images]# docker tag f14f97292b4c hyperledger/fabric-couchdb:latest
[root@vm192168302 images]# docker load < fabric-baseos-amd64-0.4.14.tar
Loaded image ID: sha256:75f5fb1a0e0c2676b1a9e5572cf7d9c4122452d30f910a27ba69dde9912b2d20
[root@vm192168302 images]# docker tag 75f5fb1a0e0c hyperledger/fabric-baseos-amd64-0.4.15:latest
[root@vm192168302 images]# # List the images
[root@vm192168302 images]# docker images
REPOSITORY                               TAG      IMAGE ID       CREATED         SIZE
hyperledger/fabric-tools                 latest   0a44f4261a55   20 months ago   1.56GB
hyperledger/fabric-ccenv                 latest   5b31d55f5f3a   20 months ago   1.43GB
hyperledger/fabric-orderer               latest   54f372205580   20 months ago   150MB
hyperledger/fabric-peer                  latest   304fac59b501   20 months ago   157MB
hyperledger/fabric-zookeeper             latest   d36da0db87a4   23 months ago   1.43GB
hyperledger/fabric-kafka                 latest   a3b095201c66   23 months ago   1.44GB
hyperledger/fabric-couchdb               latest   f14f97292b4c   23 months ago   1.5GB
hyperledger/fabric-baseos-amd64-0.4.15   latest   75f5fb1a0e0c   23 months ago   124MB
[root@vm192168302 images]#
```

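(6) fabric-samples

Fabric sample code. Its first-network example is well suited to checking whether the basic Fabric environment is completely configured.

Download: https://github.com/hyperledger/fabric-samples

Switch to the fabric-samples directory: cd /root/fabric-tools/fabric-samples

Enter first-network: cd first-network

Make the files in the directory readable, writable, and executable: chmod 777 -R ./*

Start the sample network with byfn.sh: ./byfn.sh up

If the run finishes with END, the basic Fabric environment is working.

Shut down the sample network with byfn.sh: ./byfn.sh down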

(7) nodejs*

Node.js is the runtime for fabric-node-sdk. fabric-node-sdk supports Node.js versions 8.x.x through 10.x.x.

This component is optional; install it only if you need it.

Node.js package download: https://nodejs.org/dist/v10.15.0/

Switch to the nodejs directory: cd /root/fabric-tools/nodejs

Follow the instructions in readme.txt, which reads as follows:

```shell
# Extract
tar -C /usr/local -xzf node-v10.15.0-linux-x64.tar.gz
# Rename the extracted directory
cd /usr/local
mv node-v10.15.0-linux-x64 nodejs
# Return to the home directory
cd ~
# Open the environment variable configuration file
sudo vim /etc/profile
# Add this line
export PATH=$PATH:/usr/local/nodejs/bin
# Reload
source /etc/profile
# Check the version
node -v
```
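The supported range (8.x.x through 10.x.x) can be checked against the output of `node -v` with a small function. This is a minimal sketch, assuming a POSIX shell; the function name `node_version_supported` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: succeed if a version string such as "v10.15.0"
# has a major version between 8 and 10 inclusive.
node_version_supported() {
    version=${1#v}          # drop the leading "v" printed by `node -v`
    major=${version%%.*}    # keep everything before the first dot
    [ "$major" -ge 8 ] && [ "$major" -le 10 ]
}

# Usage: node_version_supported "$(node -v)" && echo "supported by fabric-node-sdk"
```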

Fabric operations

(I) Prepare the required files

(1) crypto-config.yaml

The cryptogen tool reads this file to generate the certificates.

Some of these certificates are used to start the network nodes; the rest serve as client certificates.

For more information, see: https://hyperledgercn.github.io/hyperledgerDocs/

Create a folder named kafka to hold the files: mkdir kafka

Enter the new folder: cd kafka

Create crypto-config.yaml with the following content: vim crypto-config.yaml

```yaml
OrdererOrgs:
  - Name: Orderer
    Domain: example.com
    CA:
      Country: CN
      Province: HeNan
      Locality: ZhengZhou
    Specs:
      - Hostname: orderer0
      - Hostname: orderer1
      - Hostname: orderer2

PeerOrgs:
  - Name: Org1
    Domain: org1.example.com
    EnableNodeOUs: true
    CA:
      Country: CN
      Province: HeNan
      Locality: ZhengZhou
    Template:
      Count: 2
    Users:
      Count: 1
  - Name: Org2
    Domain: org2.example.com
    EnableNodeOUs: true
    CA:
      Country: CN
      Province: HeNan
      Locality: ZhengZhou
    Template:
      Count: 2
    Users:
      Count: 1
```
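With `Template: Count: 2`, cryptogen generates material for two peers per organization, which under its default naming are peer0 and peer1 in the organization's domain. The sketch below lists the expected host names so they can be compared with the /etc/hosts entries; the function name `peer_hosts` is hypothetical, and the default `peer<index>.<domain>` naming starting at index 0 is assumed.

```shell
#!/bin/sh
# Hypothetical helper: print the peer host names expected for one organization,
# assuming cryptogen's default naming peer<index>.<domain> starting at index 0.
peer_hosts() {
    domain=$1
    count=$2
    i=0
    while [ "$i" -lt "$count" ]; do
        printf 'peer%s.%s\n' "$i" "$domain"
        i=$((i + 1))
    done
}

# Usage:
#   peer_hosts org1.example.com 2
#   peer_hosts org2.example.com 2
```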

(2) configtx.yaml

configtx.yaml is the configuration file that configtxgen, the Hyperledger Fabric network operations tool, uses to generate the genesis block or channel transactions. Its content directly determines the content of the generated genesis block.

For more information, see: https://segmentfault.com/a/1190000018990043?utm_source=tag-newest

Create configtx.yaml with the following content: vim configtx.yaml

```yaml
Organizations:
  - &OrdererOrg
    Name: OrdererOrg
    ID: OrdererMSP
    MSPDir: crypto-config/ordererOrganizations/example.com/msp
    Policies:
      Readers:
        Type: Signature
        Rule: "OR('OrdererMSP.member')"
      Writers:
        Type: Signature
        Rule: "OR('OrdererMSP.member')"
      Admins:
        Type: Signature
        Rule: "OR('OrdererMSP.admin')"

  - &Org1
    Name: Org1MSP
    ID: Org1MSP
    MSPDir: crypto-config/peerOrganizations/org1.example.com/msp
    Policies:
      Readers:
        Type: Signature
        Rule: "OR('Org1MSP.admin', 'Org1MSP.peer', 'Org1MSP.client')"
      Writers:
        Type: Signature
        Rule: "OR('Org1MSP.admin', 'Org1MSP.client')"
      Admins:
        Type: Signature
        Rule: "OR('Org1MSP.admin')"
    AnchorPeers:
      - Host: peer0.org1.example.com
        Port: 7051

  - &Org2
    Name: Org2MSP
    ID: Org2MSP
    MSPDir: crypto-config/peerOrganizations/org2.example.com/msp
    Policies:
      Readers:
        Type: Signature
        Rule: "OR('Org2MSP.admin', 'Org2MSP.peer', 'Org2MSP.client')"
      Writers:
        Type: Signature
        Rule: "OR('Org2MSP.admin', 'Org2MSP.client')"
      Admins:
        Type: Signature
        Rule: "OR('Org2MSP.admin')"
    AnchorPeers:
      - Host: peer0.org2.example.com
        Port: 7051

Capabilities:
  Global: &ChannelCapabilities
    V1_1: true
  Orderer: &OrdererCapabilities
    V1_1: true
  Application: &ApplicationCapabilities
    V1_2: true

Application: &ApplicationDefaults
  Organizations:
  Policies:
    Readers:
      Type: ImplicitMeta
      Rule: "ANY Readers"
    Writers:
      Type: ImplicitMeta
      Rule: "ANY Writers"
    Admins:
      Type: ImplicitMeta
      Rule: "MAJORITY Admins"
  Capabilities:
    <<: *ApplicationCapabilities

Orderer: &OrdererDefaults
  #
  OrdererType: kafka
  Addresses:
    - orderer0.example.com:7050
    - orderer1.example.com:7050
    - orderer2.example.com:7050
  BatchTimeout: 2s
  BatchSize:
    MaxMessageCount: 10
    AbsoluteMaxBytes: 98 MB
    PreferredMaxBytes: 512 KB
  Kafka:
    Brokers:
      - kafka0:9092
      - kafka1:9092
      - kafka2:9092
      - kafka3:9092
  ########################################################
  Organizations:
  Policies:
    Readers:
      Type: ImplicitMeta
      Rule: "ANY Readers"
    Writers:
      Type: ImplicitMeta
      Rule: "ANY Writers"
    Admins:
      Type: ImplicitMeta
      Rule: "MAJORITY Admins"
    BlockValidation:
      Type: ImplicitMeta
      Rule: "ANY Writers"
  Capabilities:
    <<: *OrdererCapabilities

Channel: &ChannelDefaults
  Policies:
    Readers:
      Type: ImplicitMeta
      Rule: "ANY Readers"
    Writers:
      Type: ImplicitMeta
      Rule: "ANY Writers"
    Admins:
      Type: ImplicitMeta
      Rule: "MAJORITY Admins"
  Capabilities:
    <<: *ChannelCapabilities

Profiles:
  TwoOrgsOrdererGenesis:
    <<: *ChannelDefaults
    Orderer:
      <<: *OrdererDefaults
      Organizations:
        - *OrdererOrg
    Consortiums:
      SampleConsortium:
        Organizations:
          - *Org1
          - *Org2
  TwoOrgsChannel:
    Consortium: SampleConsortium
    Application:
      <<: *ApplicationDefaults
      Organizations:
        - *Org1
        - *Org2
```

(3) Generate the key pairs and certificates

Generate the public/private keys and the certificates.

```text
[root@vm192168302 kafka]# cryptogen generate --config=./crypto-config.yaml
org1.example.com
org2.example.com
[root@vm192168302 kafka]# tree -L 2
.
├── configtx.yaml
├── crypto-config
│   ├── ordererOrganizations
│   └── peerOrganizations
└── crypto-config.yaml

3 directories, 2 files
[root@vm192168302 kafka]#
```

(4) Generate the genesis block

The genesis block contains the network information.

```text
[root@vm192168302 kafka]# mkdir channel-artifacts
[root@vm192168302 kafka]# ls
channel-artifacts  configtx.yaml  crypto-config  crypto-config.yaml
[root@vm192168302 kafka]# configtxgen -profile TwoOrgsOrdererGenesis -outputBlock ./channel-artifacts/genesis.block
2020-09-17 09:39:55.312 CST [common.tools.configtxgen] main -> WARN 001 Omitting the channel ID for configtxgen for output operations is deprecated.  Explicitly passing the channel ID will be required in the future, defaulting to 'testchainid'.
2020-09-17 09:39:55.312 CST [common.tools.configtxgen] main -> INFO 002 Loading configuration
2020-09-17 09:39:55.322 CST [common.tools.configtxgen.localconfig] completeInitialization -> INFO 003 orderer type: kafka
2020-09-17 09:39:55.322 CST [common.tools.configtxgen.localconfig] Load -> INFO 004 Loaded configuration: /root/kafka/configtx.yaml
2020-09-17 09:39:55.331 CST [common.tools.configtxgen.localconfig] completeInitialization -> INFO 005 orderer type: kafka
2020-09-17 09:39:55.331 CST [common.tools.configtxgen.localconfig] LoadTopLevel -> INFO 006 Loaded configuration: /root/kafka/configtx.yaml
2020-09-17 09:39:55.333 CST [common.tools.configtxgen] doOutputBlock -> INFO 007 Generating genesis block
2020-09-17 09:39:55.333 CST [common.tools.configtxgen] doOutputBlock -> INFO 008 Writing genesis block
[root@vm192168302 kafka]# tree -L 2
.
├── channel-artifacts
│   └── genesis.block
├── configtx.yaml
├── crypto-config
│   ├── ordererOrganizations
│   └── peerOrganizations
└── crypto-config.yaml

4 directories, 3 files
[root@vm192168302 kafka]#
```

(5) Generate the channel configuration transaction

This produces the channel file (mychannel.tx) that is later used to create the channel.

```text
[root@vm192168302 kafka]# configtxgen -profile TwoOrgsChannel -outputCreateChannelTx ./channel-artifacts/mychannel.tx -channelID mychannel
2020-09-17 09:45:52.982 CST [common.tools.configtxgen] main -> INFO 001 Loading configuration
2020-09-17 09:45:52.994 CST [common.tools.configtxgen.localconfig] Load -> INFO 002 Loaded configuration: /root/kafka/configtx.yaml
2020-09-17 09:45:53.004 CST [common.tools.configtxgen.localconfig] completeInitialization -> INFO 003 orderer type: kafka
2020-09-17 09:45:53.004 CST [common.tools.configtxgen.localconfig] LoadTopLevel -> INFO 004 Loaded configuration: /root/kafka/configtx.yaml
2020-09-17 09:45:53.004 CST [common.tools.configtxgen] doOutputChannelCreateTx -> INFO 005 Generating new channel configtx
2020-09-17 09:45:53.004 CST [common.tools.configtxgen.encoder] NewChannelGroup -> WARN 006 Default policy emission is deprecated, please include policy specifications for the channel group in configtx.yaml
2020-09-17 09:45:53.005 CST [common.tools.configtxgen.encoder] NewChannelGroup -> WARN 007 Default policy emission is deprecated, please include policy specifications for the channel group in configtx.yaml
2020-09-17 09:45:53.006 CST [common.tools.configtxgen] doOutputChannelCreateTx -> INFO 008 Writing new channel tx
[root@vm192168302 kafka]# tree -L 2
.
├── channel-artifacts
│   ├── genesis.block
│   └── mychannel.tx
├── configtx.yaml
├── crypto-config
│   ├── ordererOrganizations
│   └── peerOrganizations
└── crypto-config.yaml

4 directories, 4 files
[root@vm192168302 kafka]#
```
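Before moving on, it can help to confirm that both artifacts were actually written. The following is a minimal sketch, assuming a POSIX shell; the function name `check_artifacts` is hypothetical and the file names are the ones produced in steps (4) and (5).

```shell
#!/bin/sh
# Hypothetical helper: succeed only if genesis.block and mychannel.tx
# both exist in the given directory and are non-empty.
check_artifacts() {
    dir=$1
    for name in genesis.block mychannel.tx; do
        if [ ! -s "$dir/$name" ]; then
            echo "missing or empty: $dir/$name" >&2
            return 1
        fi
    done
}

# Usage: check_artifacts ./channel-artifacts && echo "artifacts ready"
```

For a closer look at the content, configtxgen also offers `-inspectBlock` and `-inspectChannelCreateTx`, which print an artifact as JSON.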

(6) Smart contract

The smart contract (chaincode) to be installed in the channel.

chaincode_example02.go is used as the example.

Create a chaincode folder under the kafka directory and copy chaincode_example02 into it.

```text
[root@vm192168305 kafka]# cd ..
[root@vm192168305 ~]# cd fabric-tools/
[root@vm192168305 fabric-tools]# cd fabric-samples/
[root@vm192168305 fabric-samples]# cd chaincode
[root@vm192168305 chaincode]# ls
abac  chaincode_example02  fabcar  marbles02  marbles02_private  sacc
[root@vm192168305 chaincode]# cd chaincode_example02/
[root@vm192168305 chaincode_example02]# ls
go  java  node
[root@vm192168305 chaincode_example02]# cd go/
[root@vm192168305 go]# ls
chaincode_example02.go
[root@vm192168305 go]# mkdir -p /root/kafka/chaincode/chaincode_example02/
[root@vm192168305 go]# ls
chaincode_example02.go
[root@vm192168305 go]# cp ./* /root/kafka/chaincode/chaincode_example02/
[root@vm192168305 go]# cd /root/kafka/chaincode
[root@vm192168305 chaincode]# ls
chaincode_example02
[root@vm192168305 chaincode]# cd chaincode_example02/
[root@vm192168305 chaincode_example02]# ls
chaincode_example02.go
[root@vm192168305 chaincode_example02]#
```

The smart contract code is as follows:

```go
package main

import (
	"fmt"
	"strconv"

	"github.com/hyperledger/fabric/core/chaincode/shim"
	pb "github.com/hyperledger/fabric/protos/peer"
)

// SimpleChaincode example simple Chaincode implementation
type SimpleChaincode struct {
}

func (t *SimpleChaincode) Init(stub shim.ChaincodeStubInterface) pb.Response {
	fmt.Println("ex02 Init")
	_, args := stub.GetFunctionAndParameters()
	var A, B string    // Entities
	var Aval, Bval int // Asset holdings
	var err error

	if len(args) != 4 {
		return shim.Error("Incorrect number of arguments. Expecting 4")
	}

	// Initialize the chaincode
	A = args[0]
	Aval, err = strconv.Atoi(args[1])
	if err != nil {
		return shim.Error("Expecting integer value for asset holding")
	}
	B = args[2]
	Bval, err = strconv.Atoi(args[3])
	if err != nil {
		return shim.Error("Expecting integer value for asset holding")
	}
	fmt.Printf("Aval = %d, Bval = %d\n", Aval, Bval)

	// Write the state to the ledger
	err = stub.PutState(A, []byte(strconv.Itoa(Aval)))
	if err != nil {
		return shim.Error(err.Error())
	}

	err = stub.PutState(B, []byte(strconv.Itoa(Bval)))
	if err != nil {
		return shim.Error(err.Error())
	}

	return shim.Success(nil)
}

func (t *SimpleChaincode) Invoke(stub shim.ChaincodeStubInterface) pb.Response {
	fmt.Println("ex02 Invoke")
	function, args := stub.GetFunctionAndParameters()
	if function == "invoke" {
		// Make payment of X units from A to B
		return t.invoke(stub, args)
	} else if function == "delete" {
		// Deletes an entity from its state
		return t.delete(stub, args)
	} else if function == "query" {
		// the old "Query" is now implemented in invoke
		return t.query(stub, args)
	}

	return shim.Error("Invalid invoke function name. Expecting \"invoke\" \"delete\" \"query\"")
}

// Transaction makes payment of X units from A to B
func (t *SimpleChaincode) invoke(stub shim.ChaincodeStubInterface, args []string) pb.Response {
	var A, B string    // Entities
	var Aval, Bval int // Asset holdings
	var X int          // Transaction value
	var err error

	if len(args) != 3 {
		return shim.Error("Incorrect number of arguments. Expecting 3")
	}

	A = args[0]
	B = args[1]

	// Get the state from the ledger
	// TODO: will be nice to have a GetAllState call to ledger
	Avalbytes, err := stub.GetState(A)
	if err != nil {
		return shim.Error("Failed to get state")
	}
	if Avalbytes == nil {
		return shim.Error("Entity not found")
	}
	Aval, _ = strconv.Atoi(string(Avalbytes))

	Bvalbytes, err := stub.GetState(B)
	if err != nil {
		return shim.Error("Failed to get state")
	}
	if Bvalbytes == nil {
		return shim.Error("Entity not found")
	}
	Bval, _ = strconv.Atoi(string(Bvalbytes))

	// Perform the execution
	X, err = strconv.Atoi(args[2])
	if err != nil {
		return shim.Error("Invalid transaction amount, expecting a integer value")
	}
	Aval = Aval - X
	Bval = Bval + X
	fmt.Printf("Aval = %d, Bval = %d\n", Aval, Bval)

	// Write the state back to the ledger
	err = stub.PutState(A, []byte(strconv.Itoa(Aval)))
	if err != nil {
		return shim.Error(err.Error())
	}

	err = stub.PutState(B, []byte(strconv.Itoa(Bval)))
	if err != nil {
		return shim.Error(err.Error())
	}

	return shim.Success(nil)
}

// Deletes an entity from state
func (t *SimpleChaincode) delete(stub shim.ChaincodeStubInterface, args []string) pb.Response {
	if len(args) != 1 {
		return shim.Error("Incorrect number of arguments. Expecting 1")
	}

	A := args[0]

	// Delete the key from the state in ledger
	err := stub.DelState(A)
	if err != nil {
		return shim.Error("Failed to delete state")
	}

	return shim.Success(nil)
}

// query callback representing the query of a chaincode
func (t *SimpleChaincode) query(stub shim.ChaincodeStubInterface, args []string) pb.Response {
	var A string // Entities
	var err error

	if len(args) != 1 {
		return shim.Error("Incorrect number of arguments. Expecting name of the person to query")
	}

	A = args[0]

	// Get the state from the ledger
	Avalbytes, err := stub.GetState(A)
	if err != nil {
		jsonResp := "{\"Error\":\"Failed to get state for " + A + "\"}"
		return shim.Error(jsonResp)
	}

	if Avalbytes == nil {
		jsonResp := "{\"Error\":\"Nil amount for " + A + "\"}"
		return shim.Error(jsonResp)
	}

	jsonResp := "{\"Name\":\"" + A + "\",\"Amount\":\"" + string(Avalbytes) + "\"}"
	fmt.Printf("Query Response:%s\n", jsonResp)
	return shim.Success(Avalbytes)
}

func main() {
	err := shim.Start(new(SimpleChaincode))
	if err != nil {
		fmt.Printf("Error starting Simple chaincode: %s", err)
	}
}
```

(7) docker-compose-zookeeper.yaml

Configuration files for starting ZooKeeper, one per host:

docker-compose-zookeeper0.yaml>>>>>>>>>>>>192.168.30.2

docker-compose-zookeeper1.yaml>>>>>>>>>>>>192.168.30.3

docker-compose-zookeeper2.yaml>>>>>>>>>>>>192.168.30.4

Contents of docker-compose-zookeeper0.yaml:

version: '2'
services:
  zookeeper0:
    container_name: zookeeper0
    hostname: zookeeper0
    image: hyperledger/fabric-zookeeper
    restart: always
    environment:
      - ZOO_MY_ID=1
      - ZOO_SERVERS=server.1=zookeeper0:2888:3888 server.2=zookeeper1:2888:3888 server.3=zookeeper2:2888:3888
    ports:
      - 2181:2181
      - 2888:2888
      - 3888:3888
    extra_hosts:
      - "zookeeper0:192.168.30.2"
      - "zookeeper1:192.168.30.3"
      - "zookeeper2:192.168.30.4"
      - "kafka0:192.168.30.2"
      - "kafka1:192.168.30.3"
      - "kafka2:192.168.30.4"
      - "kafka3:192.168.30.5"

Contents of docker-compose-zookeeper1.yaml:

version: '2'
services:
  zookeeper1:
    container_name: zookeeper1
    hostname: zookeeper1
    image: hyperledger/fabric-zookeeper
    restart: always
    environment:
      - ZOO_MY_ID=2
      - ZOO_SERVERS=server.1=zookeeper0:2888:3888 server.2=zookeeper1:2888:3888 server.3=zookeeper2:2888:3888
    ports:
      - 2181:2181
      - 2888:2888
      - 3888:3888
    extra_hosts:
      - "zookeeper0:192.168.30.2"
      - "zookeeper1:192.168.30.3"
      - "zookeeper2:192.168.30.4"
      - "kafka0:192.168.30.2"
      - "kafka1:192.168.30.3"
      - "kafka2:192.168.30.4"
      - "kafka3:192.168.30.5"

Contents of docker-compose-zookeeper2.yaml:

version: '2'
services:
  zookeeper2:
    container_name: zookeeper2
    hostname: zookeeper2
    image: hyperledger/fabric-zookeeper
    restart: always
    environment:
      - ZOO_MY_ID=3
      - ZOO_SERVERS=server.1=zookeeper0:2888:3888 server.2=zookeeper1:2888:3888 server.3=zookeeper2:2888:3888
    ports:
      - 2181:2181
      - 2888:2888
      - 3888:3888
    extra_hosts:
      - "zookeeper0:192.168.30.2"
      - "zookeeper1:192.168.30.3"
      - "zookeeper2:192.168.30.4"
      - "kafka0:192.168.30.2"
      - "kafka1:192.168.30.3"
      - "kafka2:192.168.30.4"
      - "kafka3:192.168.30.5"
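The three ZooKeeper files differ only in the service name and in `ZOO_MY_ID`, and each `ZOO_MY_ID` has to match that host's `server.N` entry in `ZOO_SERVERS`. A small sketch of that relationship (the function names `zoo_servers` and `zoo_my_id` are made up for illustration):

```shell
# zoo_servers COUNT -> the ZOO_SERVERS value for zookeeper0..zookeeper(COUNT-1).
# server.N is one-based, while the hostnames in this setup are zero-based.
zoo_servers() {
  n=$1
  i=0
  out=''
  while [ "$i" -lt "$n" ]; do
    out="$out${out:+ }server.$((i + 1))=zookeeper$i:2888:3888"
    i=$((i + 1))
  done
  printf '%s\n' "$out"
}

# zoo_my_id INDEX -> the ZOO_MY_ID to put in docker-compose-zookeeper<INDEX>.yaml
zoo_my_id() { echo $(($1 + 1)); }

zoo_servers 3
for i in 0 1 2; do
  echo "zookeeper$i -> ZOO_MY_ID=$(zoo_my_id "$i")"
done
```

If an ID and its `server.N` line disagree, the ensemble members will most likely fail to recognise each other, so it is worth checking the three files side by side.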

(8) docker-compose-kafka.yaml

Configuration files for starting Kafka, one per host:

docker-compose-kafka0.yaml >>>>>>>>>>>>192.168.30.2

docker-compose-kafka1.yaml >>>>>>>>>>>>192.168.30.3

docker-compose-kafka2.yaml >>>>>>>>>>>>192.168.30.4

docker-compose-kafka3.yaml >>>>>>>>>>>>192.168.30.5

Contents of docker-compose-kafka0.yaml:

version: '2'
services:
  kafka0:
    container_name: kafka0
    hostname: kafka0
    image: hyperledger/fabric-kafka
    restart: always
    environment:
      - KAFKA_MESSAGE_MAX_BYTES=103809024 # 99 * 1024 * 1024 B
      - KAFKA_REPLICA_FETCH_MAX_BYTES=103809024 # 99 * 1024 * 1024 B
      - KAFKA_UNCLEAN_LEADER_ELECTION_ENABLE=false
      - KAFKA_BROKER_ID=0
      - KAFKA_MIN_INSYNC_REPLICAS=2
      - KAFKA_DEFAULT_REPLICATION_FACTOR=3
      - KAFKA_ZOOKEEPER_CONNECT=zookeeper0:2181,zookeeper1:2181,zookeeper2:2181
    ports:
      - 9092:9092
    extra_hosts:
      - "zookeeper0:192.168.30.2"
      - "zookeeper1:192.168.30.3"
      - "zookeeper2:192.168.30.4"
      - "kafka0:192.168.30.2"
      - "kafka1:192.168.30.3"
      - "kafka2:192.168.30.4"
      - "kafka3:192.168.30.5"

Contents of docker-compose-kafka1.yaml:

version: '2'
services:
  kafka1:
    container_name: kafka1
    hostname: kafka1
    image: hyperledger/fabric-kafka
    restart: always
    environment:
      - KAFKA_MESSAGE_MAX_BYTES=103809024 # 99 * 1024 * 1024 B
      - KAFKA_REPLICA_FETCH_MAX_BYTES=103809024 # 99 * 1024 * 1024 B
      - KAFKA_UNCLEAN_LEADER_ELECTION_ENABLE=false
      - KAFKA_BROKER_ID=1
      - KAFKA_MIN_INSYNC_REPLICAS=2
      - KAFKA_DEFAULT_REPLICATION_FACTOR=3
      - KAFKA_ZOOKEEPER_CONNECT=zookeeper0:2181,zookeeper1:2181,zookeeper2:2181
    ports:
      - 9092:9092
    extra_hosts:
      - "zookeeper0:192.168.30.2"
      - "zookeeper1:192.168.30.3"
      - "zookeeper2:192.168.30.4"
      - "kafka0:192.168.30.2"
      - "kafka1:192.168.30.3"
      - "kafka2:192.168.30.4"
      - "kafka3:192.168.30.5"

Contents of docker-compose-kafka2.yaml:

version: '2'
services:
  kafka2:
    container_name: kafka2
    hostname: kafka2
    image: hyperledger/fabric-kafka
    restart: always
    environment:
      - KAFKA_MESSAGE_MAX_BYTES=103809024 # 99 * 1024 * 1024 B
      - KAFKA_REPLICA_FETCH_MAX_BYTES=103809024 # 99 * 1024 * 1024 B
      - KAFKA_UNCLEAN_LEADER_ELECTION_ENABLE=false
      - KAFKA_BROKER_ID=2
      - KAFKA_MIN_INSYNC_REPLICAS=2
      - KAFKA_DEFAULT_REPLICATION_FACTOR=3
      - KAFKA_ZOOKEEPER_CONNECT=zookeeper0:2181,zookeeper1:2181,zookeeper2:2181
    ports:
      - 9092:9092
    extra_hosts:
      - "zookeeper0:192.168.30.2"
      - "zookeeper1:192.168.30.3"
      - "zookeeper2:192.168.30.4"
      - "kafka0:192.168.30.2"
      - "kafka1:192.168.30.3"
      - "kafka2:192.168.30.4"
      - "kafka3:192.168.30.5"

Contents of docker-compose-kafka3.yaml:

version: '2'
services:
  kafka3:
    container_name: kafka3
    hostname: kafka3
    image: hyperledger/fabric-kafka
    restart: always
    environment:
      - KAFKA_MESSAGE_MAX_BYTES=103809024 # 99 * 1024 * 1024 B
      - KAFKA_REPLICA_FETCH_MAX_BYTES=103809024 # 99 * 1024 * 1024 B
      - KAFKA_UNCLEAN_LEADER_ELECTION_ENABLE=false
      - KAFKA_BROKER_ID=3
      - KAFKA_MIN_INSYNC_REPLICAS=2
      - KAFKA_DEFAULT_REPLICATION_FACTOR=3
      - KAFKA_ZOOKEEPER_CONNECT=zookeeper0:2181,zookeeper1:2181,zookeeper2:2181
    ports:
      - 9092:9092
    extra_hosts:
      - "zookeeper0:192.168.30.2"
      - "zookeeper1:192.168.30.3"
      - "zookeeper2:192.168.30.4"
      - "kafka0:192.168.30.2"
      - "kafka1:192.168.30.3"
      - "kafka2:192.168.30.4"
      - "kafka3:192.168.30.5"
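The numbers in the Kafka files can be sanity-checked with a little arithmetic. The following is a back-of-the-envelope sketch, assuming the usual Kafka meaning of `min.insync.replicas` and of the default replication factor. The variable and function names are illustrative only.

```shell
# Values taken from the Kafka compose files.
BROKERS=4              # kafka0..kafka3
REPLICATION_FACTOR=3   # KAFKA_DEFAULT_REPLICATION_FACTOR
MIN_INSYNC=2           # KAFKA_MIN_INSYNC_REPLICAS

# 99 MiB expressed in bytes, the value used for the two *_MAX_BYTES settings.
max_bytes() { echo $((99 * 1024 * 1024)); }

# Replicas of a partition that may fall out of sync while writes are still accepted.
lagging_replicas_tolerated() { echo $((REPLICATION_FACTOR - MIN_INSYNC)); }

# Brokers that may be down while a topic with the full replication factor
# can still be created (in Fabric, each channel maps to a Kafka topic).
brokers_down_for_new_topic() { echo $((BROKERS - REPLICATION_FACTOR)); }

echo "max message bytes: $(max_bytes)"
echo "lagging replicas tolerated: $(lagging_replicas_tolerated)"
echo "brokers down while channels can still be created: $(brokers_down_for_new_topic)"
```

Under these assumptions the four-broker layout tolerates one broker outage both for writes to existing channels and for creating new ones, which is presumably why a fourth broker (kafka3) is deployed on its own host.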

(9) docker-compose-orderer.yaml

Configuration files for starting the orderer nodes, one per host:

docker-compose-orderer0.yaml >>>>>>>>>>>>192.168.30.2

docker-compose-orderer1.yaml >>>>>>>>>>>>192.168.30.3

docker-compose-orderer2.yaml >>>>>>>>>>>>192.168.30.4

Contents of docker-compose-orderer0.yaml:

version: '2'
services:
  orderer0.example.com:
    container_name: orderer0.example.com
    image: hyperledger/fabric-orderer
    environment:
      - ORDERER_GENERAL_LOGLEVEL=debug
      - ORDERER_GENERAL_LISTENADDRESS=0.0.0.0
      - ORDERER_GENERAL_GENESISMETHOD=file
      - ORDERER_GENERAL_GENESISFILE=/var/hyperledger/orderer/orderer.genesis.block
      - ORDERER_GENERAL_LOCALMSPID=OrdererMSP
      - ORDERER_GENERAL_LOCALMSPDIR=/var/hyperledger/orderer/msp
      # enabled TLS
      - ORDERER_GENERAL_TLS_ENABLED=true
      - ORDERER_GENERAL_TLS_PRIVATEKEY=/var/hyperledger/orderer/tls/server.key
      - ORDERER_GENERAL_TLS_CERTIFICATE=/var/hyperledger/orderer/tls/server.crt
      - ORDERER_GENERAL_TLS_ROOTCAS=/var/hyperledger/orderer/tls/ca.crt
      - ORDERER_KAFKA_RETRY_LONGINTERVAL=10s
      - ORDERER_KAFKA_RETRY_LONGTOTAL=100s
      - ORDERER_KAFKA_RETRY_SHORTINTERVAL=1s
      - ORDERER_KAFKA_RETRY_SHORTTOTAL=30s
      - ORDERER_KAFKA_VERBOSE=true
    working_dir: /opt/gopath/src/github.com/hyperledger/fabric
    command: orderer
    volumes:
      - ./channel-artifacts/genesis.block:/var/hyperledger/orderer/orderer.genesis.block
      - ./crypto-config/ordererOrganizations/example.com/orderers/orderer0.example.com/msp:/var/hyperledger/orderer/msp
      - ./crypto-config/ordererOrganizations/example.com/orderers/orderer0.example.com/tls/:/var/hyperledger/orderer/tls
    ports:
      - 7050:7050
    extra_hosts:
      - "kafka0:192.168.30.2"
      - "kafka1:192.168.30.3"
      - "kafka2:192.168.30.4"
      - "kafka3:192.168.30.5"

Contents of docker-compose-orderer1.yaml:

version: '2'
services:
  orderer1.example.com:
    container_name: orderer1.example.com
    image: hyperledger/fabric-orderer
    environment:
      - ORDERER_GENERAL_LOGLEVEL=debug
      - ORDERER_GENERAL_LISTENADDRESS=0.0.0.0
      - ORDERER_GENERAL_GENESISMETHOD=file
      - ORDERER_GENERAL_GENESISFILE=/var/hyperledger/orderer/orderer.genesis.block
      - ORDERER_GENERAL_LOCALMSPID=OrdererMSP
      - ORDERER_GENERAL_LOCALMSPDIR=/var/hyperledger/orderer/msp
      # enabled TLS
      - ORDERER_GENERAL_TLS_ENABLED=true
      - ORDERER_GENERAL_TLS_PRIVATEKEY=/var/hyperledger/orderer/tls/server.key
      - ORDERER_GENERAL_TLS_CERTIFICATE=/var/hyperledger/orderer/tls/server.crt
      - ORDERER_GENERAL_TLS_ROOTCAS=/var/hyperledger/orderer/tls/ca.crt
      - ORDERER_KAFKA_RETRY_LONGINTERVAL=10s
      - ORDERER_KAFKA_RETRY_LONGTOTAL=100s
      - ORDERER_KAFKA_RETRY_SHORTINTERVAL=1s
      - ORDERER_KAFKA_RETRY_SHORTTOTAL=30s
      - ORDERER_KAFKA_VERBOSE=true
    working_dir: /opt/gopath/src/github.com/hyperledger/fabric
    command: orderer
    volumes:
      - ./channel-artifacts/genesis.block:/var/hyperledger/orderer/orderer.genesis.block
      - ./crypto-config/ordererOrganizations/example.com/orderers/orderer1.example.com/msp:/var/hyperledger/orderer/msp
      - ./crypto-config/ordererOrganizations/example.com/orderers/orderer1.example.com/tls/:/var/hyperledger/orderer/tls
    ports:
      - 7050:7050
    extra_hosts:
      - "kafka0:192.168.30.2"
      - "kafka1:192.168.30.3"
      - "kafka2:192.168.30.4"
      - "kafka3:192.168.30.5"

Contents of docker-compose-orderer2.yaml:

version: '2'
services:
  orderer2.example.com:
    container_name: orderer2.example.com
    image: hyperledger/fabric-orderer
    environment:
      - ORDERER_GENERAL_LOGLEVEL=debug
      - ORDERER_GENERAL_LISTENADDRESS=0.0.0.0
      - ORDERER_GENERAL_GENESISMETHOD=file
      - ORDERER_GENERAL_GENESISFILE=/var/hyperledger/orderer/orderer.genesis.block
      - ORDERER_GENERAL_LOCALMSPID=OrdererMSP
      - ORDERER_GENERAL_LOCALMSPDIR=/var/hyperledger/orderer/msp
      # enabled TLS
      - ORDERER_GENERAL_TLS_ENABLED=true
      - ORDERER_GENERAL_TLS_PRIVATEKEY=/var/hyperledger/orderer/tls/server.key
      - ORDERER_GENERAL_TLS_CERTIFICATE=/var/hyperledger/orderer/tls/server.crt
      - ORDERER_GENERAL_TLS_ROOTCAS=/var/hyperledger/orderer/tls/ca.crt
      - ORDERER_KAFKA_RETRY_LONGINTERVAL=10s
      - ORDERER_KAFKA_RETRY_LONGTOTAL=100s
      - ORDERER_KAFKA_RETRY_SHORTINTERVAL=1s
      - ORDERER_KAFKA_RETRY_SHORTTOTAL=30s
      - ORDERER_KAFKA_VERBOSE=true
    working_dir: /opt/gopath/src/github.com/hyperledger/fabric
    command: orderer
    volumes:
      - ./channel-artifacts/genesis.block:/var/hyperledger/orderer/orderer.genesis.block
      - ./crypto-config/ordererOrganizations/example.com/orderers/orderer2.example.com/msp:/var/hyperledger/orderer/msp
      - ./crypto-config/ordererOrganizations/example.com/orderers/orderer2.example.com/tls/:/var/hyperledger/orderer/tls
    ports:
      - 7050:7050
    extra_hosts:
      - "kafka0:192.168.30.2"
      - "kafka1:192.168.30.3"
      - "kafka2:192.168.30.4"
      - "kafka3:192.168.30.5"
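The four `ORDERER_KAFKA_RETRY_*` values describe two retry phases: a short phase that retries frequently, followed by a long phase that retries less often. A rough estimate of what the values above amount to, assuming the orderer simply retries once per interval until the phase total is used up (the helper name `attempts` is hypothetical):

```shell
# attempts TOTAL_SECONDS INTERVAL_SECONDS -> approximate number of retries in a phase
attempts() { echo $(($1 / $2)); }

short=$(attempts 30 1)     # ORDERER_KAFKA_RETRY_SHORTTOTAL / ORDERER_KAFKA_RETRY_SHORTINTERVAL
long=$(attempts 100 10)    # ORDERER_KAFKA_RETRY_LONGTOTAL / ORDERER_KAFKA_RETRY_LONGINTERVAL

echo "short phase: about $short attempts over 30s"
echo "long phase: about $long attempts over 100s"
echo "time before the orderer gives up: about $((30 + 100))s"
```

In practice this means the Kafka brokers should be reachable within roughly two minutes of an orderer starting, which is one reason the nodes are started tier by tier.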

(10) docker-compose-peer.yaml

Configuration files for starting the peer nodes, one per host:

docker-compose-peer10.yaml>>>>>>>>>>>>192.168.30.6

docker-compose-peer11.yaml>>>>>>>>>>>>192.168.30.7

docker-compose-peer20.yaml>>>>>>>>>>>>192.168.30.8

docker-compose-peer21.yaml>>>>>>>>>>>>192.168.30.9

Contents of docker-compose-peer10.yaml:

version: '2'
services:
  peer0.org1.example.com:
    container_name: peer0.org1.example.com
    hostname: peer0.org1.example.com
    image: hyperledger/fabric-peer
    environment:
      - CORE_PEER_ADDRESSAUTODETECT=true
      - CORE_PEER_ID=peer0.org1.example.com
      - CORE_PEER_ADDRESS=peer0.org1.example.com:7051
      - CORE_PEER_CHAINCODELISTENADDRESS=peer0.org1.example.com:7052
      - CORE_PEER_GOSSIP_EXTERNALENDPOINT=peer0.org1.example.com:7051
      - CORE_PEER_LOCALMSPID=Org1MSP
      - CORE_VM_ENDPOINT=unix:///host/var/run/docker.sock
      - CORE_LOGGING_LEVEL=DEBUG
      - CORE_PEER_GOSSIP_USELEADERELECTION=true
      - CORE_PEER_GOSSIP_ORGLEADER=false
      - CORE_PEER_PROFILE_ENABLED=true
      - CORE_PEER_TLS_ENABLED=true
      - CORE_PEER_TLS_CERT_FILE=/etc/hyperledger/fabric/tls/server.crt
      - CORE_PEER_TLS_KEY_FILE=/etc/hyperledger/fabric/tls/server.key
      - CORE_PEER_TLS_ROOTCERT_FILE=/etc/hyperledger/fabric/tls/ca.crt
    working_dir: /opt/gopath/src/github.com/hyperledger/fabric/peer
    command: peer node start
    volumes:
      - /var/run/:/host/var/run/
      - ./crypto-config/peerOrganizations/org1.example.com/peers/peer0.org1.example.com/msp:/etc/hyperledger/fabric/msp
      - ./crypto-config/peerOrganizations/org1.example.com/peers/peer0.org1.example.com/tls:/etc/hyperledger/fabric/tls
    ports:
      - 7051:7051
      - 7052:7052
      - 7053:7053
    extra_hosts:
      - "orderer0.example.com:192.168.30.2"
      - "orderer1.example.com:192.168.30.3"
      - "orderer2.example.com:192.168.30.4"
  cli:
    container_name: cli
    image: hyperledger/fabric-tools
    tty: true
    environment:
      - GOPATH=/opt/gopath
      - CORE_VM_ENDPOINT=unix:///host/var/run/docker.sock
      - CORE_LOGGING_LEVEL=DEBUG
      - CORE_PEER_ID=cli
      - CORE_PEER_ADDRESS=peer0.org1.example.com:7051
      - CORE_PEER_LOCALMSPID=Org1MSP
      - CORE_PEER_TLS_ENABLED=true
      - CORE_PEER_TLS_CERT_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org1.example.com/peers/peer0.org1.example.com/tls/server.crt
      - CORE_PEER_TLS_KEY_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org1.example.com/peers/peer0.org1.example.com/tls/server.key
      - CORE_PEER_TLS_ROOTCERT_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org1.example.com/peers/peer0.org1.example.com/tls/ca.crt
      - CORE_PEER_MSPCONFIGPATH=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org1.example.com/users/Admin@org1.example.com/msp
    working_dir: /opt/gopath/src/github.com/hyperledger/fabric/peer
    volumes:
      - /var/run/:/host/var/run/
      - ./chaincode:/opt/gopath/src/github.com/hyperledger/fabric/chaincode
      - ./crypto-config:/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/
      - ./channel-artifacts:/opt/gopath/src/github.com/hyperledger/fabric/peer/channel-artifacts
    extra_hosts:
      - "orderer0.example.com:192.168.30.2"
      - "orderer1.example.com:192.168.30.3"
      - "orderer2.example.com:192.168.30.4"
      - "peer0.org1.example.com:192.168.30.6"
      - "peer1.org1.example.com:192.168.30.7"
      - "peer0.org2.example.com:192.168.30.8"
      - "peer1.org2.example.com:192.168.30.9"

Contents of docker-compose-peer11.yaml:

version: '2'
services:
  peer1.org1.example.com:
    container_name: peer1.org1.example.com
    hostname: peer1.org1.example.com
    image: hyperledger/fabric-peer
    environment:
      - CORE_PEER_ADDRESSAUTODETECT=true
      - CORE_PEER_ID=peer1.org1.example.com
      - CORE_PEER_ADDRESS=peer1.org1.example.com:7051
      - CORE_PEER_CHAINCODELISTENADDRESS=peer1.org1.example.com:7052
      - CORE_PEER_GOSSIP_EXTERNALENDPOINT=peer1.org1.example.com:7051
      - CORE_PEER_LOCALMSPID=Org1MSP
      - CORE_VM_ENDPOINT=unix:///host/var/run/docker.sock
      - CORE_LOGGING_LEVEL=DEBUG
      - CORE_PEER_GOSSIP_USELEADERELECTION=true
      - CORE_PEER_GOSSIP_ORGLEADER=false
      - CORE_PEER_PROFILE_ENABLED=true
      - CORE_PEER_TLS_ENABLED=true
      - CORE_PEER_TLS_CERT_FILE=/etc/hyperledger/fabric/tls/server.crt
      - CORE_PEER_TLS_KEY_FILE=/etc/hyperledger/fabric/tls/server.key
      - CORE_PEER_TLS_ROOTCERT_FILE=/etc/hyperledger/fabric/tls/ca.crt
    working_dir: /opt/gopath/src/github.com/hyperledger/fabric/peer
    command: peer node start
    volumes:
      - /var/run/:/host/var/run/
      - ./crypto-config/peerOrganizations/org1.example.com/peers/peer1.org1.example.com/msp:/etc/hyperledger/fabric/msp
      - ./crypto-config/peerOrganizations/org1.example.com/peers/peer1.org1.example.com/tls:/etc/hyperledger/fabric/tls
    ports:
      - 7051:7051
      - 7052:7052
      - 7053:7053
    extra_hosts:
      - "orderer0.example.com:192.168.30.2"
      - "orderer1.example.com:192.168.30.3"
      - "orderer2.example.com:192.168.30.4"
  cli:
    container_name: cli
    image: hyperledger/fabric-tools
    tty: true
    environment:
      - GOPATH=/opt/gopath
      - CORE_VM_ENDPOINT=unix:///host/var/run/docker.sock
      - CORE_LOGGING_LEVEL=DEBUG
      - CORE_PEER_ID=cli
      - CORE_PEER_ADDRESS=peer1.org1.example.com:7051
      - CORE_PEER_LOCALMSPID=Org1MSP
      - CORE_PEER_TLS_ENABLED=true
      - CORE_PEER_TLS_CERT_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org1.example.com/peers/peer1.org1.example.com/tls/server.crt
      - CORE_PEER_TLS_KEY_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org1.example.com/peers/peer1.org1.example.com/tls/server.key
      - CORE_PEER_TLS_ROOTCERT_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org1.example.com/peers/peer1.org1.example.com/tls/ca.crt
      - CORE_PEER_MSPCONFIGPATH=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org1.example.com/users/Admin@org1.example.com/msp
    working_dir: /opt/gopath/src/github.com/hyperledger/fabric/peer
    volumes:
      - /var/run/:/host/var/run/
      - ./chaincode:/opt/gopath/src/github.com/hyperledger/fabric/chaincode
      - ./crypto-config:/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/
      - ./channel-artifacts:/opt/gopath/src/github.com/hyperledger/fabric/peer/channel-artifacts
    extra_hosts:
      - "orderer0.example.com:192.168.30.2"
      - "orderer1.example.com:192.168.30.3"
      - "orderer2.example.com:192.168.30.4"
      - "peer0.org1.example.com:192.168.30.6"
      - "peer1.org1.example.com:192.168.30.7"
      - "peer0.org2.example.com:192.168.30.8"
      - "peer1.org2.example.com:192.168.30.9"

Contents of docker-compose-peer20.yaml:

version: '2'
services:
  peer0.org2.example.com:
    container_name: peer0.org2.example.com
    hostname: peer0.org2.example.com
    image: hyperledger/fabric-peer
    environment:
      - CORE_PEER_ADDRESSAUTODETECT=true
      - CORE_PEER_ID=peer0.org2.example.com
      - CORE_PEER_ADDRESS=peer0.org2.example.com:7051
      - CORE_PEER_CHAINCODELISTENADDRESS=peer0.org2.example.com:7052
      - CORE_PEER_GOSSIP_EXTERNALENDPOINT=peer0.org2.example.com:7051
      - CORE_PEER_LOCALMSPID=Org2MSP
      - CORE_VM_ENDPOINT=unix:///host/var/run/docker.sock
      - CORE_LOGGING_LEVEL=DEBUG
      - CORE_PEER_GOSSIP_USELEADERELECTION=true
      - CORE_PEER_GOSSIP_ORGLEADER=false
      - CORE_PEER_PROFILE_ENABLED=true
      - CORE_PEER_TLS_ENABLED=true
      - CORE_PEER_TLS_CERT_FILE=/etc/hyperledger/fabric/tls/server.crt
      - CORE_PEER_TLS_KEY_FILE=/etc/hyperledger/fabric/tls/server.key
      - CORE_PEER_TLS_ROOTCERT_FILE=/etc/hyperledger/fabric/tls/ca.crt
    working_dir: /opt/gopath/src/github.com/hyperledger/fabric/peer
    command: peer node start
    volumes:
      - /var/run/:/host/var/run/
      - ./crypto-config/peerOrganizations/org2.example.com/peers/peer0.org2.example.com/msp:/etc/hyperledger/fabric/msp
      - ./crypto-config/peerOrganizations/org2.example.com/peers/peer0.org2.example.com/tls:/etc/hyperledger/fabric/tls
    ports:
      - 7051:7051
      - 7052:7052
      - 7053:7053
    extra_hosts:
      - "orderer0.example.com:192.168.30.2"
      - "orderer1.example.com:192.168.30.3"
      - "orderer2.example.com:192.168.30.4"
  cli:
    container_name: cli
    image: hyperledger/fabric-tools
    tty: true
    environment:
      - GOPATH=/opt/gopath
      - CORE_VM_ENDPOINT=unix:///host/var/run/docker.sock
      - CORE_LOGGING_LEVEL=DEBUG
      - CORE_PEER_ID=cli
      - CORE_PEER_ADDRESS=peer0.org2.example.com:7051
      - CORE_PEER_LOCALMSPID=Org2MSP
      - CORE_PEER_TLS_ENABLED=true
      - CORE_PEER_TLS_CERT_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org2.example.com/peers/peer0.org2.example.com/tls/server.crt
      - CORE_PEER_TLS_KEY_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org2.example.com/peers/peer0.org2.example.com/tls/server.key
      - CORE_PEER_TLS_ROOTCERT_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org2.example.com/peers/peer0.org2.example.com/tls/ca.crt
      - CORE_PEER_MSPCONFIGPATH=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org2.example.com/users/Admin@org2.example.com/msp
    working_dir: /opt/gopath/src/github.com/hyperledger/fabric/peer
    volumes:
      - /var/run/:/host/var/run/
      - ./chaincode:/opt/gopath/src/github.com/hyperledger/fabric/chaincode
      - ./crypto-config:/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/
      - ./channel-artifacts:/opt/gopath/src/github.com/hyperledger/fabric/peer/channel-artifacts
    extra_hosts:
      - "orderer0.example.com:192.168.30.2"
      - "orderer1.example.com:192.168.30.3"
      - "orderer2.example.com:192.168.30.4"
      - "peer0.org1.example.com:192.168.30.6"
      - "peer1.org1.example.com:192.168.30.7"
      - "peer0.org2.example.com:192.168.30.8"
      - "peer1.org2.example.com:192.168.30.9"

Contents of docker-compose-peer21.yaml:

version: '2'
services:
  peer1.org2.example.com:
    container_name: peer1.org2.example.com
    hostname: peer1.org2.example.com
    image: hyperledger/fabric-peer
    environment:
      - CORE_PEER_ADDRESSAUTODETECT=true
      - CORE_PEER_ID=peer1.org2.example.com
      - CORE_PEER_ADDRESS=peer1.org2.example.com:7051
      - CORE_PEER_CHAINCODELISTENADDRESS=peer1.org2.example.com:7052
      - CORE_PEER_GOSSIP_EXTERNALENDPOINT=peer1.org2.example.com:7051
      - CORE_PEER_LOCALMSPID=Org2MSP
      - CORE_VM_ENDPOINT=unix:///host/var/run/docker.sock
      - CORE_LOGGING_LEVEL=DEBUG
      - CORE_PEER_GOSSIP_USELEADERELECTION=true
      - CORE_PEER_GOSSIP_ORGLEADER=false
      - CORE_PEER_PROFILE_ENABLED=true
      - CORE_PEER_TLS_ENABLED=true
      - CORE_PEER_TLS_CERT_FILE=/etc/hyperledger/fabric/tls/server.crt
      - CORE_PEER_TLS_KEY_FILE=/etc/hyperledger/fabric/tls/server.key
      - CORE_PEER_TLS_ROOTCERT_FILE=/etc/hyperledger/fabric/tls/ca.crt
    working_dir: /opt/gopath/src/github.com/hyperledger/fabric/peer
    command: peer node start
    volumes:
      - /var/run/:/host/var/run/
      - ./crypto-config/peerOrganizations/org2.example.com/peers/peer1.org2.example.com/msp:/etc/hyperledger/fabric/msp
      - ./crypto-config/peerOrganizations/org2.example.com/peers/peer1.org2.example.com/tls:/etc/hyperledger/fabric/tls
    ports:
      - 7051:7051
      - 7052:7052
      - 7053:7053
    extra_hosts:
      - "orderer0.example.com:192.168.30.2"
      - "orderer1.example.com:192.168.30.3"
      - "orderer2.example.com:192.168.30.4"
  cli:
    container_name: cli
    image: hyperledger/fabric-tools
    tty: true
    environment:
      - GOPATH=/opt/gopath
      - CORE_VM_ENDPOINT=unix:///host/var/run/docker.sock
      - CORE_LOGGING_LEVEL=DEBUG
      - CORE_PEER_ID=cli
      - CORE_PEER_ADDRESS=peer1.org2.example.com:7051
      - CORE_PEER_LOCALMSPID=Org2MSP
      - CORE_PEER_TLS_ENABLED=true
      - CORE_PEER_TLS_CERT_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org2.example.com/peers/peer1.org2.example.com/tls/server.crt
      - CORE_PEER_TLS_KEY_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org2.example.com/peers/peer1.org2.example.com/tls/server.key
      - CORE_PEER_TLS_ROOTCERT_FILE=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org2.example.com/peers/peer1.org2.example.com/tls/ca.crt
      - CORE_PEER_MSPCONFIGPATH=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/peerOrganizations/org2.example.com/users/Admin@org2.example.com/msp
    working_dir: /opt/gopath/src/github.com/hyperledger/fabric/peer
    volumes:
      - /var/run/:/host/var/run/
      - ./chaincode:/opt/gopath/src/github.com/hyperledger/fabric/chaincode
      - ./crypto-config:/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/
      - ./channel-artifacts:/opt/gopath/src/github.com/hyperledger/fabric/peer/channel-artifacts
    extra_hosts:
      - "orderer0.example.com:192.168.30.2"
      - "orderer1.example.com:192.168.30.3"
      - "orderer2.example.com:192.168.30.4"
      - "peer0.org1.example.com:192.168.30.6"
      - "peer1.org1.example.com:192.168.30.7"
      - "peer0.org2.example.com:192.168.30.8"
      - "peer1.org2.example.com:192.168.30.9"
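The two digits in each peer file name encode the organisation and the peer index, and every per-peer value in the files (service name, `CORE_PEER_ID`, MSP ID, certificate paths) follows from them. A minimal sketch of that naming scheme, with hypothetical helper names `peer_host` and `peer_msp`:

```shell
# peer_host SUFFIX -> container/host name.
# SUFFIX is the two digits in docker-compose-peerNN.yaml.
peer_host() {
  org=${1%?}   # first digit: organisation number
  idx=${1#?}   # second digit: peer index inside the organisation
  echo "peer$idx.org$org.example.com"
}

# peer_msp SUFFIX -> the value used for CORE_PEER_LOCALMSPID
peer_msp() { echo "Org${1%?}MSP"; }

for s in 10 11 20 21; do
  echo "docker-compose-peer$s.yaml -> $(peer_host "$s") ($(peer_msp "$s"))"
done
```

When adapting one peer file into another, every occurrence of the host name and of the organisation has to change together. A missed path typically surfaces later as a TLS or MSP error.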

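(11) Distribute the files

Strictly speaking, each node should keep only the private keys it actually uses, and all other private keys should be deleted before the files are transferred.

That is too tedious for a small experiment like this one,

so the whole directory is copied to every host as-is.

cd /root
scp -r kafka root@192.168.30.3:/root/
...
scp -r kafka root@192.168.30.9:/root/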
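The elided copy commands follow one pattern per host. One way to avoid typing them by hand is to generate them first and review the list before running anything. This is a dry-run sketch, assuming the remaining hosts are 192.168.30.3 through 192.168.30.9, and the function name `distribute_cmds` is made up.

```shell
# Print one scp command per target host (192.168.30.3 .. 192.168.30.9).
# Nothing is copied here; the function only echoes the commands.
distribute_cmds() {
  i=3
  while [ "$i" -le 9 ]; do
    echo "scp -r kafka root@192.168.30.$i:/root/"
    i=$((i + 1))
  done
}

distribute_cmds            # review the commands
# distribute_cmds | sh     # run them from /root once they look right
```

Each copy still prompts for the root password unless SSH keys have been set up between the hosts.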

(12) Directory layout

The final directory structure is shown below.

[root@vm192168302 kafka]# tree -L 3
.
├── chaincode
│   └── chaincode_example02
│       └── chaincode_example02.go
├── channel-artifacts
│   ├── genesis.block
│   └── mychannel.tx
├── configtx.yaml
├── crypto-config
│   ├── ordererOrganizations
│   │   └── example.com
│   └── peerOrganizations
│       ├── org1.example.com
│       └── org2.example.com
├── crypto-config.yaml
├── docker-compose-kafka0.yaml
├── docker-compose-kafka1.yaml
├── docker-compose-kafka2.yaml
├── docker-compose-kafka3.yaml
├── docker-compose-orderer0.yaml
├── docker-compose-orderer1.yaml
├── docker-compose-orderer2.yaml
├── docker-compose-peer10.yaml
├── docker-compose-peer11.yaml
├── docker-compose-peer20.yaml
├── docker-compose-peer21.yaml
├── docker-compose-zookeeper0.yaml
├── docker-compose-zookeeper1.yaml
└── docker-compose-zookeeper2.yaml

9 directories, 19 files
[root@vm192168302 kafka]#

(II) Start the nodes in order

(1) ZooKeeper

docker-compose -f docker-compose-zookeeper0.yaml up -d

docker-compose -f docker-compose-zookeeper1.yaml up -d

docker-compose -f docker-compose-zookeeper2.yaml up -d

# 192.168.30.2
[root@vm192168302 kafka]# docker-compose -f docker-compose-zookeeper0.yaml up -d
Creating zookeeper0 ... done
[root@vm192168302 kafka]# docker ps
CONTAINER ID   IMAGE                          COMMAND                  CREATED         STATUS         PORTS                                                                    NAMES
dceb298110c6   hyperledger/fabric-zookeeper   "/docker-entrypoint.…"   4 seconds ago   Up 3 seconds   0.0.0.0:2181->2181/tcp, 0.0.0.0:2888->2888/tcp, 0.0.0.0:3888->3888/tcp   zookeeper0
[root@vm192168302 kafka]#

# 192.168.30.3: zookeeper1 is started the same way (its container appears in the docker ps output of the Kafka step)

# 192.168.30.4
[root@vm192168304 kafka]# docker-compose -f docker-compose-zookeeper2.yaml up -d
Creating zookeeper2 ... done
[root@vm192168304 kafka]# docker ps
CONTAINER ID   IMAGE                          COMMAND                  CREATED         STATUS         PORTS                                                                    NAMES
1b2f250a7e99   hyperledger/fabric-zookeeper   "/docker-entrypoint.…"   7 minutes ago   Up 7 minutes   0.0.0.0:2181->2181/tcp, 0.0.0.0:2888->2888/tcp, 0.0.0.0:3888->3888/tcp   zookeeper2
[root@vm192168304 kafka]#

(2) Kafka

docker-compose -f docker-compose-kafka0.yaml up -d

docker-compose -f docker-compose-kafka1.yaml up -d

docker-compose -f docker-compose-kafka2.yaml up -d

docker-compose -f docker-compose-kafka3.yaml up -d

# 192.168.30.2
[root@vm192168302 kafka]# docker-compose -f docker-compose-kafka0.yaml up -d
WARNING: Found orphan containers (zookeeper0) for this project. If you removed or renamed this service in your compose file, you can run this command with the --remove-orphans flag to clean it up.
Creating kafka0 ... done
[root@vm192168302 kafka]# docker ps
CONTAINER ID   IMAGE                          COMMAND                  CREATED              STATUS              PORTS                                                                    NAMES
df819f7b3a4d   hyperledger/fabric-kafka       "/docker-entrypoint.…"   8 seconds ago        Up 8 seconds        0.0.0.0:9092->9092/tcp, 9093/tcp                                         kafka0
dceb298110c6   hyperledger/fabric-zookeeper   "/docker-entrypoint.…"   About a minute ago   Up About a minute   0.0.0.0:2181->2181/tcp, 0.0.0.0:2888->2888/tcp, 0.0.0.0:3888->3888/tcp   zookeeper0
[root@vm192168302 kafka]#

# 192.168.30.3
[root@vm192168303 kafka]# docker-compose -f docker-compose-kafka1.yaml up -d
WARNING: Found orphan containers (zookeeper1) for this project. If you removed or renamed this service in your compose file, you can run this command with the --remove-orphans flag to clean it up.
Creating kafka1 ... done
[root@vm192168303 kafka]# docker ps
CONTAINER ID   IMAGE                          COMMAND                  CREATED          STATUS          PORTS                                                                    NAMES
c814a657f405   hyperledger/fabric-kafka       "/docker-entrypoint.…"   29 seconds ago   Up 28 seconds   0.0.0.0:9092->9092/tcp, 9093/tcp                                         kafka1
f783cfca8fd7   hyperledger/fabric-zookeeper   "/docker-entrypoint.…"   9 minutes ago    Up 9 minutes    0.0.0.0:2181->2181/tcp, 0.0.0.0:2888->2888/tcp, 0.0.0.0:3888->3888/tcp   zookeeper1
[root@vm192168303 kafka]#

# 192.168.30.4
[root@vm192168304 kafka]# docker-compose -f docker-compose-kafka2.yaml up -d
WARNING: Found orphan containers (zookeeper2) for this project. If you removed or renamed this service in your compose file, you can run this command with the --remove-orphans flag to clean it up.
Creating kafka2 ... done
[root@vm192168304 kafka]# docker ps
CONTAINER ID   IMAGE                          COMMAND                  CREATED          STATUS          PORTS                                                                    NAMES
8a73ae5e3491   hyperledger/fabric-kafka       "/docker-entrypoint.…"   18 seconds ago   Up 17 seconds   0.0.0.0:9092->9092/tcp, 9093/tcp                                         kafka2
1b2f250a7e99   hyperledger/fabric-zookeeper   "/docker-entrypoint.…"   9 minutes ago    Up 9 minutes    0.0.0.0:2181->2181/tcp, 0.0.0.0:2888->2888/tcp, 0.0.0.0:3888->3888/tcp   zookeeper2
[root@vm192168304 kafka]#

# 192.168.30.5
[root@vm192168305 kafka]# docker-compose -f docker-compose-kafka3.yaml up -d
Creating kafka3 ... done
[root@vm192168305 kafka]# docker ps
CONTAINER ID   IMAGE                      COMMAND                  CREATED         STATUS         PORTS                              NAMES
bdfab209f947   hyperledger/fabric-kafka   "/docker-entrypoint.…"   7 seconds ago   Up 5 seconds   0.0.0.0:9092->9092/tcp, 9093/tcp   kafka3
[root@vm192168305 kafka]#

(3) Orderer

docker-compose -f docker-compose-orderer0.yaml up -d

docker-compose -f docker-compose-orderer1.yaml up -d

docker-compose -f docker-compose-orderer2.yaml up -d

# 192.168.30.2
[root@vm192168302 kafka]# docker-compose -f docker-compose-orderer0.yaml up -d
WARNING: Found orphan containers (kafka0, zookeeper0) for this project. If you removed or renamed this service in your compose file, you can run this command with the --remove-orphans flag to clean it up.
Creating orderer0.example.com ... done
[root@vm192168302 kafka]# docker ps
CONTAINER ID   IMAGE                          COMMAND                  CREATED          STATUS          PORTS                                                                    NAMES
e03212513d50   hyperledger/fabric-orderer     "orderer"                45 seconds ago   Up 44 seconds   0.0.0.0:7050->7050/tcp                                                   orderer0.example.com
df819f7b3a4d   hyperledger/fabric-kafka       "/docker-entrypoint.…"   2 minutes ago    Up 2 minutes    0.0.0.0:9092->9092/tcp, 9093/tcp                                         kafka0
dceb298110c6   hyperledger/fabric-zookeeper   "/docker-entrypoint.…"   4 minutes ago    Up 4 minutes    0.0.0.0:2181->2181/tcp, 0.0.0.0:2888->2888/tcp, 0.0.0.0:3888->3888/tcp   zookeeper0
[root@vm192168302 kafka]#

# 192.168.30.3
[root@vm192168303 kafka]# docker-compose -f docker-compose-orderer1.yaml up -d
WARNING: Found orphan containers (kafka1, zookeeper1) for this project. If you removed or renamed this service in your compose file, you can run this command with the --remove-orphans flag to clean it up.
Creating orderer1.example.com ... done
[root@vm192168303 kafka]# docker ps
CONTAINER ID   IMAGE                          COMMAND                  CREATED          STATUS          PORTS                                                                    NAMES
269933735d8e   hyperledger/fabric-orderer     "orderer"                27 seconds ago   Up 26 seconds   0.0.0.0:7050->7050/tcp                                                   orderer1.example.com
c814a657f405   hyperledger/fabric-kafka       "/docker-entrypoint.…"   2 minutes ago    Up 2 minutes    0.0.0.0:9092->9092/tcp, 9093/tcp                                         kafka1
f783cfca8fd7   hyperledger/fabric-zookeeper   "/docker-entrypoint.…"   11 minutes ago   Up 11 minutes   0.0.0.0:2181->2181/tcp, 0.0.0.0:2888->2888/tcp, 0.0.0.0:3888->3888/tcp   zookeeper1
[root@vm192168303 kafka]#

# 192.168.30.4
[root@vm192168304 kafka]# docker-compose -f docker-compose-orderer2.yaml up -d
WARNING: Found orphan containers (kafka2, zookeeper2) for this project. If you removed or renamed this service in your compose file, you can run this command with the --remove-orphans flag to clean it up.
Creating orderer2.example.com ... done
[root@vm192168304 kafka]# docker ps
CONTAINER ID   IMAGE                          COMMAND                  CREATED          STATUS          PORTS                                                                    NAMES
9383bda5a2ef   hyperledger/fabric-orderer     "orderer"                5 seconds ago    Up 4 seconds    0.0.0.0:7050->7050/tcp                                                   orderer2.example.com
8a73ae5e3491   hyperledger/fabric-kafka       "/docker-entrypoint.…"   2 minutes ago    Up 2 minutes    0.0.0.0:9092->9092/tcp, 9093/tcp                                         kafka2
1b2f250a7e99   hyperledger/fabric-zookeeper   "/docker-entrypoint.…"   11 minutes ago   Up 11 minutes   0.0.0.0:2181->2181/tcp, 0.0.0.0:2888->2888/tcp, 0.0.0.0:3888->3888/tcp   zookeeper2
[root@vm192168304 kafka]#
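Steps (1) to (3) bring the services up tier by tier: all ZooKeeper nodes first, then all Kafka brokers, then the orderers. The whole plan can be printed as a checklist with a short loop. This is only a sketch that echoes the commands together with the host each one belongs on. The function name `plan` is hypothetical, and the host numbering assumes instance N runs on 192.168.30.(N+2), as in the file-to-host lists earlier.

```shell
# Print the start-up plan tier by tier; nothing is started here.
plan() {
  for t in zookeeper kafka orderer; do
    for n in 0 1 2 3; do
      # Only Kafka has a fourth instance (kafka3 on 192.168.30.5).
      if [ "$n" -eq 3 ] && [ "$t" != kafka ]; then
        continue
      fi
      echo "192.168.30.$((n + 2)): docker-compose -f docker-compose-$t$n.yaml up -d"
    done
  done
}

plan
```

Running the commands in this order lets each tier find the one it depends on. The orphan-container warnings in the transcripts are expected, since several compose files share one project directory on each host.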

(4) peer10-192.168.30.6

#####################################

############## Start the containers

#####################################

// Start the peer and cli containers

docker-compose -f docker-compose-peer10.yaml up -d

#####################################

##############Create and join the channel

#####################################

// Enter the cli container

docker exec -it cli bash

// Set the path to the orderer0 TLS CA certificate

ORDERER_CA=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/ordererOrganizations/example.com/orderers/orderer0.example.com/msp/tlscacerts/tlsca.example.com-cert.pem

// Create the channel

peer channel create -o orderer0.example.com:7050 -c mychannel -f ./channel-artifacts/mychannel.tx --tls --cafile $ORDERER_CA

// Join the channel

peer channel join -b mychannel.block
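
In the transcript for this host, the orderer answers SERVICE_UNAVAILABLE twice before block 0 is received, and the peer CLI recovers on its own. If a command does exit with an error because the Kafka-backed orderers are not ready yet, a generic retry wrapper is one way to handle it. The sketch below is an assumption, not part of the original walkthrough; the function name `retry` and its arguments are hypothetical.

```shell
#!/bin/sh
# retry MAX_TRIES DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it exits 0 or MAX_TRIES attempts have been made.
# The body runs in a subshell, so its variables do not leak to the caller.
retry() (
    max_tries=$1
    delay=$2
    shift 2
    attempt=1
    while :; do
        if "$@"; then
            exit 0
        fi
        if [ "$attempt" -ge "$max_tries" ]; then
            echo "giving up after $attempt attempts: $*" >&2
            exit 1
        fi
        attempt=$((attempt + 1))
        sleep "$delay"
    done
)

# Hypothetical usage inside the cli container (not executed here):
#   retry 5 3 peer channel create -o orderer0.example.com:7050 -c mychannel \
#       -f ./channel-artifacts/mychannel.tx --tls --cafile "$ORDERER_CA"
```

The retry count and delay are arbitrary; pick values that match how long the Kafka cluster takes to settle in your environment.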

#####################################

##############Instantiate the smart contract

#####################################

// Install the smart contract

peer chaincode install -n mycc -p github.com/hyperledger/fabric/chaincode/chaincode_example02 -v 1.0

// Instantiate the smart contract

ORDERER_CA=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/ordererOrganizations/example.com/orderers/orderer0.example.com/msp/tlscacerts/tlsca.example.com-cert.pem

peer chaincode instantiate -o orderer0.example.com:7050 --tls --cafile $ORDERER_CA -C mychannel -n mycc -v 1.0 -c '{"Args":["init","a","200","b","400"]}' -P "OR ('Org1MSP.peer','Org2MSP.peer')"

// Query a

peer chaincode query -C mychannel -n mycc -c '{"Args":["query","a"]}'

// Exit the container

ctrl+D
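
The `-c` argument has to be valid JSON wrapped in plain ASCII quotes; typographic quotes picked up when copying from a web page make the command fail. As a small sketch, a helper can build the string from plain words. The name `cc_args` is hypothetical, and the helper assumes the arguments contain no double quotes or backslashes, since it does no JSON escaping.

```shell
#!/bin/sh
# cc_args FUNCTION [ARG...]
# Prints a chaincode argument string such as {"Args":["query","a"]}.
# Assumption: arguments contain no double quotes or backslashes.
cc_args() (
    out='{"Args":['
    sep=''
    for arg in "$@"; do
        out="$out$sep\"$arg\""
        sep=','
    done
    printf '%s]}\n' "$out"
)

cc_args query a
cc_args init a 200 b 400

# Hypothetical usage inside the cli container (not executed here):
#   peer chaincode query -C mychannel -n mycc -c "$(cc_args query a)"
```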

#####################################

##############Copy the channel block file

#####################################

// Copy from the cli container to the local host

docker cp cli:/opt/gopath/src/github.com/hyperledger/fabric/peer/mychannel.block ./

// Send it to the other 3 peers

scp ./mychannel.block root@192.168.30.7:/root/kafka

scp ./mychannel.block root@192.168.30.8:/root/kafka

scp ./mychannel.block root@192.168.30.9:/root/kafka
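
The three copies differ only in the target host, so they can be expressed as a loop. This is a minimal sketch; the function name `distribute_block` and the `DRY_RUN` switch are assumptions introduced here, and with `DRY_RUN=1` the commands are only printed.

```shell
#!/bin/sh
# distribute_block FILE HOST [HOST...]
# Copies FILE to /root/kafka on every host as root.
# With DRY_RUN=1 the scp commands are printed instead of executed.
distribute_block() (
    file=$1
    shift
    for host in "$@"; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "scp $file root@$host:/root/kafka"
        else
            scp "$file" "root@$host:/root/kafka" || exit 1
        fi
    done
)

# Print the commands for the three remaining peers without running them.
DRY_RUN=1 distribute_block ./mychannel.block 192.168.30.7 192.168.30.8 192.168.30.9
```

Dropping `DRY_RUN=1` runs the real copies, and each one prompts for the root password unless SSH keys are set up.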

#####################################

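##############Inspect the chaincode container

#####################################

docker ps

// Replace the ID below with the chaincode container ID shown by docker ps on your host

docker logs ee6761722c9c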
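
The chaincode container ID changes on every run, so it can be looked up by name instead of copied by hand. The sketch below assumes chaincode containers are the ones whose name starts with `dev-`, as in the `docker ps` listing for this host; the function name `chaincode_ids` is hypothetical.

```shell
#!/bin/sh
# chaincode_ids: reads "ID NAME" pairs on stdin and prints the IDs
# of containers whose name starts with dev- (assumed chaincode containers).
chaincode_ids() {
    awk '$2 ~ /^dev-/ { print $1 }'
}

# Sample input taken from the docker ps listing for this host.
printf '%s\n' \
    'd922efbec000 dev-peer0.org1.example.com-mycc-1.0' \
    'c46011557243 cli' \
    '7ab0090cdf13 peer0.org1.example.com' | chaincode_ids

# On a real host (not executed here):
#   docker ps --format '{{.ID}} {{.Names}}' | chaincode_ids
#   docker logs "$(docker ps --format '{{.ID}} {{.Names}}' | chaincode_ids | head -n 1)"
```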

[root@vm192168306 kafka]# docker-compose -f docker-compose-peer10.yaml up -d
Creating peer0.org1.example.com ... done
Creating cli ... done
[root@vm192168306 kafka]# docker exec -it cli bash
root@c46011557243:/opt/gopath/src/github.com/hyperledger/fabric/peer# ORDERER_CA=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/ordererOrganizations/example.com/orderers/orderer0.example.com/msp/tlscacerts/tlsca.example.com-cert.pem
root@c46011557243:/opt/gopath/src/github.com/hyperledger/fabric/peer# peer channel create -o orderer0.example.com:7050 -c mychannel -f ./channel-artifacts/mychannel.tx --tls --cafile $ORDERER_CA
2020-09-18 06:51:21.070 UTC [main] InitCmd -> WARN 001 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:51:21.089 UTC [main] SetOrdererEnv -> WARN 002 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:51:21.097 UTC [channelCmd] InitCmdFactory -> INFO 003 Endorser and orderer connections initialized
2020-09-18 06:51:21.154 UTC [cli.common] readBlock -> INFO 004 Got status: &{SERVICE_UNAVAILABLE}
2020-09-18 06:51:21.159 UTC [channelCmd] InitCmdFactory -> INFO 005 Endorser and orderer connections initialized
2020-09-18 06:51:21.361 UTC [cli.common] readBlock -> INFO 006 Got status: &{SERVICE_UNAVAILABLE}
2020-09-18 06:51:21.365 UTC [channelCmd] InitCmdFactory -> INFO 007 Endorser and orderer connections initialized
2020-09-18 06:51:21.568 UTC [cli.common] readBlock -> INFO 008 Received block: 0
root@c46011557243:/opt/gopath/src/github.com/hyperledger/fabric/peer# peer channel join -b mychannel.block
2020-09-18 06:51:28.777 UTC [main] InitCmd -> WARN 001 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:51:28.794 UTC [main] SetOrdererEnv -> WARN 002 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:51:28.800 UTC [channelCmd] InitCmdFactory -> INFO 003 Endorser and orderer connections initialized
2020-09-18 06:51:28.843 UTC [channelCmd] executeJoin -> INFO 004 Successfully submitted proposal to join channel
root@c46011557243:/opt/gopath/src/github.com/hyperledger/fabric/peer# peer chaincode install -n mycc -p github.com/hyperledger/fabric/chaincode/chaincode_example02 -v 1.0
2020-09-18 06:51:38.701 UTC [main] InitCmd -> WARN 001 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:51:38.718 UTC [main] SetOrdererEnv -> WARN 002 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:51:38.725 UTC [chaincodeCmd] checkChaincodeCmdParams -> INFO 003 Using default escc
2020-09-18 06:51:38.725 UTC [chaincodeCmd] checkChaincodeCmdParams -> INFO 004 Using default vscc
2020-09-18 06:51:39.229 UTC [chaincodeCmd] install -> INFO 005 Installed remotely response:<status:200 payload:"OK" >
root@c46011557243:/opt/gopath/src/github.com/hyperledger/fabric/peer# ORDERER_CA=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/ordererOrganizations/example.com/orderers/orderer0.example.com/msp/tlscacerts/tlsca.example.com-cert.pem
root@c46011557243:/opt/gopath/src/github.com/hyperledger/fabric/peer# peer chaincode instantiate -o orderer0.example.com:7050 --tls --cafile $ORDERER_CA -C mychannel -n mycc -v 1.0 -c '{"Args":["init","a","200","b","400"]}' -P "OR ('Org1MSP.peer','Org2MSP.peer')"
2020-09-18 06:51:58.054 UTC [main] InitCmd -> WARN 001 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:51:58.071 UTC [main] SetOrdererEnv -> WARN 002 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:51:58.085 UTC [chaincodeCmd] checkChaincodeCmdParams -> INFO 003 Using default escc
2020-09-18 06:51:58.085 UTC [chaincodeCmd] checkChaincodeCmdParams -> INFO 004 Using default vscc
root@c46011557243:/opt/gopath/src/github.com/hyperledger/fabric/peer# peer chaincode query -C mychannel -n mycc -c '{"Args":["query","a"]}'
2020-09-18 06:52:07.416 UTC [main] InitCmd -> WARN 001 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:52:07.434 UTC [main] SetOrdererEnv -> WARN 002 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
200
root@c46011557243:/opt/gopath/src/github.com/hyperledger/fabric/peer# exit
[root@vm192168306 kafka]# ls
chaincode                   docker-compose-kafka2.yaml    docker-compose-peer20.yaml
channel-artifacts           docker-compose-kafka3.yaml    docker-compose-peer21.yaml
configtx.yaml               docker-compose-orderer0.yaml  docker-compose-zookeeper0.yaml
crypto-config               docker-compose-orderer1.yaml  docker-compose-zookeeper1.yaml
crypto-config.yaml          docker-compose-orderer2.yaml  docker-compose-zookeeper2.yaml
docker-compose-kafka0.yaml  docker-compose-peer10.yaml    mychannel.block
docker-compose-kafka1.yaml  docker-compose-peer11.yaml
[root@vm192168306 kafka]# scp ./mychannel.block root@192.168.30.7:/root/kafka
root@192.168.30.7's password:
./mychannel.block: No such file or directory
[root@vm192168306 kafka]# docker cp cli:/opt/gopath/src/github.com/hyperledger/fabric/peer/mychannel.block ./
[root@vm192168306 kafka]# scp ./mychannel.block root@192.168.30.7:/root/kafka
root@192.168.30.7's password:
mychannel.block                                100%   15KB   9.9MB/s   00:00
[root@vm192168306 kafka]# scp ./mychannel.block root@192.168.30.8:/root/kafka
root@192.168.30.8's password:
mychannel.block                                100%   15KB   6.4MB/s   00:00
[root@vm192168306 kafka]# scp ./mychannel.block root@192.168.30.9:/root/kafka
root@192.168.30.9's password:
mychannel.block                                100%   15KB   6.9MB/s   00:00
[root@vm192168306 kafka]# docker ps
CONTAINER ID   IMAGE                                                                                                  COMMAND                  CREATED          STATUS          PORTS                              NAMES
d922efbec000   dev-peer0.org1.example.com-mycc-1.0-384f11f484b9302df90b453200cfb25174305fce8f53f4e94d45ee3b6cab0ce9   "chaincode -peer.add…"   11 minutes ago   Up 11 minutes                                      dev-peer0.org1.example.com-mycc-1.0
c46011557243   hyperledger/fabric-tools                                                                               "/bin/bash"              12 minutes ago   Up 12 minutes                                      cli
7ab0090cdf13   hyperledger/fabric-peer                                                                                "peer node start"        12 minutes ago   Up 12 minutes   0.0.0.0:7051-7053->7051-7053/tcp   peer0.org1.example.com
[root@vm192168306 kafka]# docker logs d922efbec000
ex02 Init
Aval = 200, Bval = 400
ex02 Invoke
Query Response:{"Name":"a","Amount":"200"}
[root@vm192168306 kafka]#

(5)peer11-192.168.30.7

#####################################

##############Start the containers

#####################################

docker-compose -f docker-compose-peer11.yaml up -d

#####################################

##############Join the channel

#####################################

// Copy the channel block file into the container

docker cp /root/kafka/mychannel.block cli:/opt/gopath/src/github.com/hyperledger/fabric/peer/

// Enter the cli container

docker exec -it cli bash

// Join the channel

peer channel join -b mychannel.block

#####################################

##############Install the chaincode

#####################################

// Install the chaincode

peer chaincode install -n mycc -p github.com/hyperledger/fabric/chaincode/chaincode_example02 -v 1.0

// Query the value of "a" through the chaincode

peer chaincode query -C mychannel -n mycc -c '{"Args":["query","a"]}'

// TLS CA certificate of the orderer node

ORDERER_CA=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/ordererOrganizations/example.com/orderers/orderer0.example.com/msp/tlscacerts/tlsca.example.com-cert.pem

// Invoke the chaincode to transfer funds

peer chaincode invoke --tls --cafile $ORDERER_CA -C mychannel -n mycc -c '{"Args":["invoke","a","b","20"]}'

ORDERER_CA=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/ordererOrganizations/example.com/orderers/orderer0.example.com/msp/tlscacerts/tlsca.example.com-cert.pem

peer chaincode invoke --tls --cafile $ORDERER_CA -C mychannel -n mycc -c '{"Args":["invoke","b","a","20"]}'
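
To check the expected balances by hand: the init call set a=200 and b=400, the first invoke moves 20 from a to b, and the second moves 20 back. The sketch below only models that arithmetic locally in shell variables; it does not talk to the chaincode, and the function name `transfer` is hypothetical.

```shell
#!/bin/sh
# Balances set by the init call earlier in the walkthrough.
a=200
b=400

# transfer FROM TO AMOUNT: moves AMOUNT between the shell variables a and b.
# This is a local model of the arithmetic only, not a chaincode call.
transfer() {
    case "$1,$2" in
        a,b) a=$((a - $3)); b=$((b + $3)) ;;
        b,a) b=$((b - $3)); a=$((a + $3)) ;;
        *) echo "unknown accounts: $1 $2" >&2; return 1 ;;
    esac
}

transfer a b 20
echo "after a->b 20: a=$a b=$b"   # a=180 b=420
transfer b a 20
echo "after b->a 20: a=$a b=$b"   # a=200 b=400
```

A query for a between the two invokes should therefore report 180, which matches the transcript for this host.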

[root@vm192168307 kafka]# docker-compose -f docker-compose-peer11.yaml up -d
Creating cli ... done
Creating peer1.org1.example.com ... done
[root@vm192168307 kafka]# docker cp /root/kafka/mychannel.block cli:/opt/gopath/src/github.com/hyperledger/fabric/peer/
[root@vm192168307 kafka]# docker exec -it cli bash
root@f66402657c83:/opt/gopath/src/github.com/hyperledger/fabric/peer# peer channel join -b mychannel.block
2020-09-18 06:54:52.196 UTC [main] InitCmd -> WARN 001 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:54:52.215 UTC [main] SetOrdererEnv -> WARN 002 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:54:52.222 UTC [channelCmd] InitCmdFactory -> INFO 003 Endorser and orderer connections initialized
2020-09-18 06:54:52.261 UTC [channelCmd] executeJoin -> INFO 004 Successfully submitted proposal to join channel
root@f66402657c83:/opt/gopath/src/github.com/hyperledger/fabric/peer# peer chaincode install -n mycc -p github.com/hyperledger/fabric/chaincode/chaincode_example02 -v 1.0
2020-09-18 06:55:00.693 UTC [main] InitCmd -> WARN 001 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:55:00.711 UTC [main] SetOrdererEnv -> WARN 002 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:55:00.717 UTC [chaincodeCmd] checkChaincodeCmdParams -> INFO 003 Using default escc
2020-09-18 06:55:00.717 UTC [chaincodeCmd] checkChaincodeCmdParams -> INFO 004 Using default vscc
2020-09-18 06:55:01.253 UTC [chaincodeCmd] install -> INFO 005 Installed remotely response:<status:200 payload:"OK" >
root@f66402657c83:/opt/gopath/src/github.com/hyperledger/fabric/peer# peer chaincode query -C mychannel -n mycc -c '{"Args":["query","a"]}'
2020-09-18 06:55:09.809 UTC [main] InitCmd -> WARN 001 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:55:09.826 UTC [main] SetOrdererEnv -> WARN 002 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
200
root@f66402657c83:/opt/gopath/src/github.com/hyperledger/fabric/peer# ORDERER_CA=/opt/gopath/src/github.com/hyperledger/fabric/peer/crypto/ordererOrganizations/example.com/orderers/orderer0.example.com/msp/tlscacerts/tlsca.example.com-cert.pem
root@f66402657c83:/opt/gopath/src/github.com/hyperledger/fabric/peer# peer chaincode invoke --tls --cafile $ORDERER_CA -C mychannel -n mycc -c '{"Args":["invoke","a","b","20"]}'
2020-09-18 06:55:48.139 UTC [main] InitCmd -> WARN 001 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:55:48.156 UTC [main] SetOrdererEnv -> WARN 002 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:55:48.177 UTC [chaincodeCmd] InitCmdFactory -> INFO 003 Retrieved channel (mychannel) orderer endpoint: orderer0.example.com:7050
2020-09-18 06:55:48.202 UTC [chaincodeCmd] chaincodeInvokeOrQuery -> INFO 004 Chaincode invoke successful. result: status:200
root@f66402657c83:/opt/gopath/src/github.com/hyperledger/fabric/peer# peer chaincode query -C mychannel -n mycc -c '{"Args":["query","a"]}'
2020-09-18 06:55:51.621 UTC [main] InitCmd -> WARN 001 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
2020-09-18 06:55:51.638 UTC [main] SetOrdererEnv -> WARN 002 CORE_LOGGING_LEVEL is no longer supported, please use the FABRIC_LOGGING_SPEC environment variable
180
root@f66402657c83:/opt/gopath/src/github.com/hyperledger/fabric/peer# exit

(6)peer20-192.168.30.8

#####################################

##############Start the containers

#####################################

docker-compose -f docker-compose-peer20.yaml up -d

#####################################

##############Join the channel

#####################################

// Copy the channel block file into the container

docker cp /root/kafka/mychannel.block cli:/opt/gopath/src/github.com/hyperledger/fabric/peer/

// Enter the cli container

docker exec -it cli bash

// Join the channel

peer channel join -b mychannel.block

#####################################

##############Install the chaincode

#####################################

// Install the chaincode

peer chaincode install -n mycc -p github.com/hyperledger/fabric/chaincode/chaincode_example02 -v 1.0

// TLS CA certificate of the orderer node